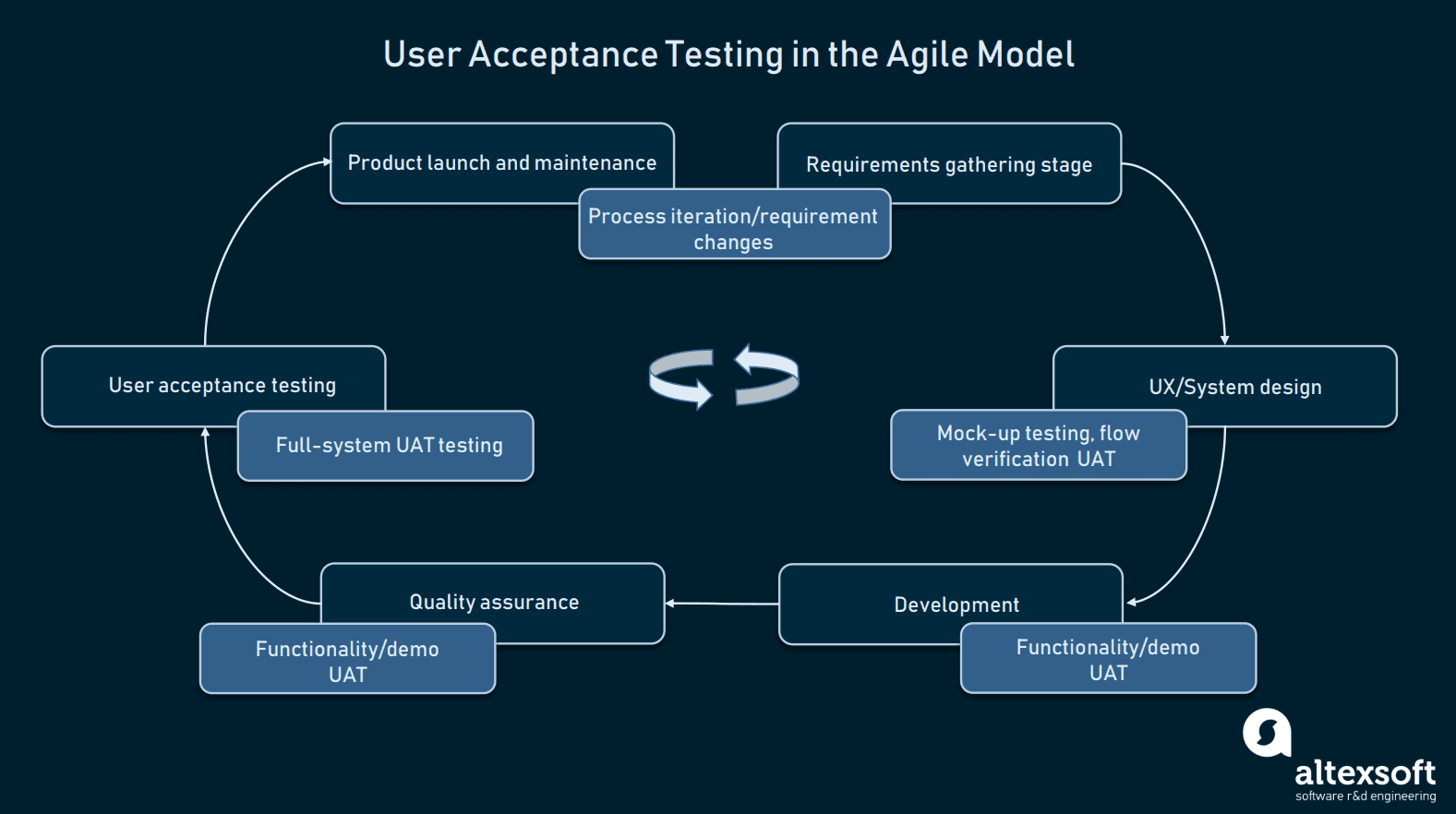

github.com/cosmos/cosmos-sdk@v0.50.10/CODING_GUIDELINES.md (about) 1 # Coding Guidelines 2 3 This document is an extension to [CONTRIBUTING](./CONTRIBUTING.md) and provides more details about the coding guidelines and requirements. 4 5 ## API & Design 6 7 * Code must be well structured: 8 * packages must have a limited responsibility (different concerns can go to different packages), 9 * types must be easy to compose, 10 * think about maintainbility and testability. 11 * "Depend upon abstractions, [not] concretions". 12 * Try to limit the number of methods you are exposing. It's easier to expose something later than to hide it. 13 * Take advantage of `internal` package concept. 14 * Follow agreed-upon design patterns and naming conventions. 15 * publicly-exposed functions are named logically, have forward-thinking arguments and return types. 16 * Avoid global variables and global configurators. 17 * Favor composable and extensible designs. 18 * Minimize code duplication. 19 * Limit third-party dependencies. 20 21 Performance: 22 23 * Avoid unnecessary operations or memory allocations. 24 25 Security: 26 27 * Pay proper attention to exploits involving: 28 * gas usage 29 * transaction verification and signatures 30 * malleability 31 * code must be always deterministic 32 * Thread safety. If some functionality is not thread-safe, or uses something that is not thread-safe, then clearly indicate the risk on each level. 33 34 ## Acceptance tests 35 36 Start the design by defining Acceptance Tests. The purpose of Acceptance Testing is to 37 validate that the product being developed corresponds to the needs of the real users 38 and is ready for launch. Hence we often talk about **User Acceptance Test** (UAT). 39 It also gives a better understanding of the product and helps designing a right interface 40 and API. 
41 42 UAT should be revisited at each stage of the product development: 43 44  45 46 ### Why Acceptance Testing 47 48 * Automated acceptance tests catch serious problems that unit or component test suites could never catch. 49 * Automated acceptance tests deliver business value the users are expecting as they test user scenarios. 50 * Automated acceptance tests executed and passed on every build help improve the software delivery process. 51 * Testers, developers, and customers need to work closely to create suitable automated acceptance test suites. 52 53 ### How to define Acceptance Test 54 55 The best way to define AT is by starting from the user stories and think about all positive and negative scenarios a user can perform. 56 57 Product Developers should collaborate with stakeholders to define AT. Functional experts and business users are both needed for defining AT. 58 59 A good pattern for defining AT is listing scenarios with [GIVEN-WHEN-THEN](https://martinfowler.com/bliki/GivenWhenThen.html) format where: 60 61 * **GIVEN**: A set of initial circumstances (e.g. bank balance) 62 * **WHEN**: Some event happens (e.g. customer attempts a transfer) 63 * **THEN**: The expected result as per the defined behavior of the system 64 65 In other words: we define a use case input, current state and the expected outcome. Example: 66 67 > Feature: User trades stocks. 68 > Scenario: User requests a sell before close of trading 69 > 70 > Given I have 100 shares of MSFT stock 71 > And I have 150 shares of APPL stock 72 > And the time is before close of trading 73 > 74 > When I ask to sell 20 shares of MSFT stock 75 > 76 > Then I should have 80 shares of MSFT stock 77 > And I should have 150 shares of APPL stock 78 > And a sell order for 20 shares of MSFT stock should have been executed 79 80 *Reference: [writing acceptance tests](https://openclassrooms.com/en/courses/4544611-write-agile-documentation-user-stories-acceptance-tests/4810081-writing-acceptance-tests)*. 
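
To illustrate how a GIVEN-WHEN-THEN scenario can map onto automated code, here is a minimal sketch of the stock-trading scenario above. It uses only the Go standard library. The `Account` and `Order` types, the `Sell` method, the two error values, and the `SellBeforeClose` function are all hypothetical names invented for this example; they are not part of the Cosmos SDK.

```go
package main

import (
	"errors"
	"fmt"
)

// Account is a hypothetical brokerage account used only for this sketch.
type Account struct {
	Shares map[string]int // shares held per ticker symbol
	Orders []Order        // executed sell orders, oldest first
}

// Order records an executed sell order (hypothetical type).
type Order struct {
	Symbol string
	Amount int
}

// ErrMarketClosed is returned when a sell is requested after close of trading.
var ErrMarketClosed = errors.New("market is closed")

// ErrInsufficientShares is returned when fewer shares are held than requested.
var ErrInsufficientShares = errors.New("insufficient shares")

// Sell executes a sell order if trading is open and enough shares are held.
func (a *Account) Sell(symbol string, amount int, marketOpen bool) error {
	if !marketOpen {
		return ErrMarketClosed
	}
	if a.Shares[symbol] < amount {
		return ErrInsufficientShares
	}
	a.Shares[symbol] -= amount
	a.Orders = append(a.Orders, Order{Symbol: symbol, Amount: amount})
	return nil
}

// SellBeforeClose runs the scenario and returns an error that names the
// first expectation that was not met, or nil if all of them hold.
func SellBeforeClose() error {
	// GIVEN: 100 MSFT shares, 150 APPL shares, and trading is still open.
	acc := &Account{Shares: map[string]int{"MSFT": 100, "APPL": 150}}
	marketOpen := true

	// WHEN: the user asks to sell 20 MSFT shares.
	if err := acc.Sell("MSFT", 20, marketOpen); err != nil {
		return fmt.Errorf("sell failed: %w", err)
	}

	// THEN: balances are updated and exactly one sell order was executed.
	if got := acc.Shares["MSFT"]; got != 80 {
		return fmt.Errorf("MSFT shares: got %d, want 80", got)
	}
	if got := acc.Shares["APPL"]; got != 150 {
		return fmt.Errorf("APPL shares: got %d, want 150", got)
	}
	if len(acc.Orders) != 1 || acc.Orders[0] != (Order{Symbol: "MSFT", Amount: 20}) {
		return fmt.Errorf("orders: got %v, want one sell of 20 MSFT", acc.Orders)
	}
	return nil
}

func main() {
	if err := SellBeforeClose(); err != nil {
		fmt.Println("FAIL:", err)
		return
	}
	fmt.Println("PASS")
}
```

Keeping the three phases as separate, commented steps makes it easy to check the code against the written scenario line by line. A negative scenario (for example, a sell requested after close of trading) would follow the same shape and assert on `ErrMarketClosed`.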

### How and where to add acceptance tests

Acceptance tests are written in the Markdown format, using the scenario template described above, and are part of the specification (`xx_test.md` file in *spec* directory). Example: [`ecocredit/spec/06_tests.md`](https://github.com/regen-network/regen-ledger/blob/7297783577e6cd102c5093365b573163680f36a1/x/ecocredit/spec/06_tests.md).

Acceptance tests should be defined during the design phase or at an early stage of development. Moreover, they should be defined before writing a module architecture, because they clarify the purpose and usage of the software.
Automated tests should cover all acceptance test scenarios.

## Automated Tests

Make sure your code is well tested:

* Provide unit tests for every unit of your code if possible. Unit tests are expected to comprise 70%-80% of your tests.
* Describe the test scenarios you are implementing for integration tests.
* Create integration tests for queries and msgs.
* Use both test cases and property / fuzz testing. We use the [rapid](https://pgregory.net/rapid) Go library for property-based and fuzz testing.
* Do not decrease code test coverage. Explain in a PR if test coverage is decreased.

We expect tests to use `require` or `assert` rather than `t.Skip` or `t.Fail`,
unless there is a reason to do otherwise.
When testing a function under a variety of different inputs, we prefer to use
[table driven tests](https://github.com/golang/go/wiki/TableDrivenTests).
Table driven test error messages should use the following format:
`<desc>, tc #<index>, i #<index>`.
`<desc>` is an optional short description of what's failing, `tc` is the
index within the test case table that is failing, and `i` is, when there
is a loop, exactly which iteration of the loop failed.
The idea is that you should be able to see the
error message and figure out exactly what failed.
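
To make the message format concrete, the following sketch builds `<desc>, tc #<index>, i #<index>` messages while walking a test table that has an inner loop. It uses only the standard library so that it runs on its own. The function under test (`double`), the `testCase` type, and the helpers `failureMsg` and `runCases` are hypothetical names chosen for illustration.

```go
package main

import "fmt"

// failureMsg formats a message as "<desc>, tc #<index>, i #<index>".
func failureMsg(desc string, tcIndex, i int) string {
	return fmt.Sprintf("%s, tc #%d, i #%d", desc, tcIndex, i)
}

// double is a hypothetical function under test.
func double(x int) int { return 2 * x }

// testCase holds several inputs that are checked in a loop, together with
// the expected output for each input.
type testCase struct {
	inputs   []int
	expected []int
}

// runCases checks every input of every test case and returns one message
// per failed check.
func runCases(cases []testCase) []string {
	var failures []string
	for tcIndex, tc := range cases {
		for i, in := range tc.inputs {
			if got := double(in); got != tc.expected[i] {
				failures = append(failures, failureMsg("double returned wrong value", tcIndex, i))
			}
		}
	}
	return failures
}

func main() {
	cases := []testCase{
		{inputs: []int{1, 2}, expected: []int{2, 4}},
		{inputs: []int{3, 4}, expected: []int{6, 9}}, // last expectation is deliberately wrong
	}
	for _, msg := range runCases(cases) {
		fmt.Println(msg) // prints "double returned wrong value, tc #1, i #1"
	}
}
```

In a real test, the same format string and indices would typically be passed as the trailing message arguments of a `require` or `assert` call instead of being collected in a slice.
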

Here is an example check:

```go
// <some table>: cases is a slice of test cases, each with an `expected` field.
for tcIndex, tc := range cases {
	// <some code>: per-case setup goes here.
	resp, err := doSomething()
	require.NoError(t, err, "unexpected error, tc #%d", tcIndex)
	require.Equal(t, tc.expected, resp, "should correctly perform X, tc #%d", tcIndex)
}
```

## Quality Assurance

We are forming a QA team that will support the core Cosmos SDK team and collaborators by:

* Improving the Cosmos SDK QA Processes
* Improving automation in QA and testing
* Defining high-quality metrics
* Maintaining and improving testing frameworks (unit tests, integration tests, and functional tests)
* Defining test scenarios.
* Verifying user experience and defining a high quality standard.
* We want to have **acceptance tests**! Document and list acceptance tests that are implemented and identify acceptance tests that are still missing.
* Acceptance tests should be specified in the `acceptance-tests` directory as Markdown files.
* Supporting other teams with testing frameworks, automation, and User Experience testing.
* Testing chain upgrades for every new breaking change.
* Defining automated tests that assure data integrity after an update.

Desired outcomes:

* QA team works with Development Team.
* QA is happening in parallel with Core Cosmos SDK development.
* Releases are more predictable.
* QA reports. The goal is for them to guide new tasks and serve as one of the QA measures.

As a developer, you must help the QA team by providing instructions for User Experience (UX) and functional testing.

### QA Team to cross check Acceptance Tests

Once the AT are defined, the QA team will have an overview of the behavior a user can expect and can:

* validate that the user experience will be good
* validate that the implementation conforms to the acceptance tests
* define **test suites** and test data, drawing on its broader overview of the use cases, to efficiently automate Acceptance Tests and reuse the work.