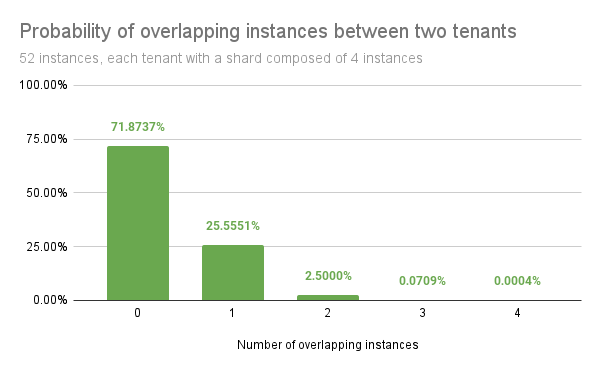

---
description: Learn how to configure shuffle sharding.
menuTitle: Shuffle sharding
title: Configure Grafana Pyroscope shuffle sharding
weight: 800
---

# Configure Grafana Pyroscope shuffle sharding

Grafana Pyroscope leverages sharding to horizontally scale both single-tenant and multi-tenant clusters beyond the capacity of a single node.

## Background

Grafana Pyroscope uses a sharding strategy that distributes the workload across a subset of the instances that run a given component.
For example, on the write path, each tenant's series are sharded across a subset of the ingesters.
The size of this subset, that is, the number of instances, is configured with a shard size parameter, which defaults to `0`.
This default value means that each tenant uses all available instances, which balances resources such as CPU and memory fairly and maximizes their usage across the cluster.

In a multi-tenant cluster, the default value of `0` has the following downsides:

- An outage affects all tenants.
- A misbehaving tenant, for example, one that causes an out-of-memory error, can negatively affect all other tenants.

Configuring a shard size value higher than `0` enables shuffle sharding. The goal of shuffle sharding is to reduce the blast radius of an outage and better isolate tenants.

## About shuffle sharding

Shuffle sharding is a technique that isolates the workloads of different tenants and gives each tenant a single-tenant experience, even if they're running in a shared cluster.
For more information about how AWS describes shuffle sharding, refer to [What is shuffle sharding?](https://aws.amazon.com/builders-library/workload-isolation-using-shuffle-sharding/).
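To make the idea concrete, the following Python sketch picks a shard for each tenant by seeding a random generator with a hash of the tenant ID and sampling `shard_size` instances. This is a simplified model, not Pyroscope's implementation, which selects instances from the hash ring; `tenant_seed` and `shuffle_shard` are hypothetical names.

```python
import hashlib
import random


def tenant_seed(tenant_id: str) -> int:
    """Derive a stable integer seed from the tenant ID."""
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def shuffle_shard(tenant_id: str, instances: list[str], shard_size: int) -> list[str]:
    """Return the subset of instances assigned to a tenant.

    A shard size of 0, or one that is equal to or higher than the number of
    instances, disables shuffle sharding and returns every instance.
    """
    ordered = sorted(instances)  # every caller starts from the same order
    if shard_size <= 0 or shard_size >= len(ordered):
        return ordered
    rng = random.Random(tenant_seed(tenant_id))  # same tenant -> same draws
    return sorted(rng.sample(ordered, shard_size))


ingesters = [f"ingester-{i}" for i in range(50)]
shard_a = shuffle_shard("tenant-a", ingesters, 4)
shard_b = shuffle_shard("tenant-b", ingesters, 4)
print("tenant-a:", shard_a)
print("tenant-b:", shard_b)
print("shared instances:", sorted(set(shard_a) & set(shard_b)))
```

Because the seed depends only on the tenant ID and the instance list is sorted first, every caller that sees the same instances computes the same shard. Unlike a ring-based selection, this sketch does not keep shards stable when instances are added or removed: one extra instance can change several members of a tenant's shard.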

Shuffle sharding assigns each tenant a shard that is composed of a subset of the Grafana Pyroscope instances.
This technique minimizes the number of overlapping instances between two tenants.
Shuffle sharding provides the following benefits:

- An outage on some Grafana Pyroscope cluster instances or nodes only affects a subset of tenants.
- A misbehaving tenant only affects the instances in its shard.
  Assuming that each tenant shard is relatively small compared to the total number of instances in the cluster, it's likely that any other tenant runs on different instances or that only a subset of its instances overlaps with the affected instances.

Using shuffle sharding doesn't require more resources, but can result in unbalanced instances.

### Low overlapping instances probability

For example, in a Grafana Pyroscope cluster that runs 50 ingesters and assigns each tenant a shuffled subset of four ingesters, there are about 230,000 possible combinations.
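The 230,000 figure is the binomial coefficient C(50, 4), which is exactly 230,300. Assuming every four-ingester shard is equally likely, the overlap between the shards of two randomly picked tenants follows a hypergeometric distribution. This sketch computes it; rounded, the results match the percentages given for this example.

```python
from math import comb


def overlap_probabilities(instances: int, shard_size: int) -> list[float]:
    """Probability that two random shards share exactly k instances, k = 0..shard_size.

    Fix tenant A's shard. Tenant B's shard overlaps it in k instances when B
    picks k of A's instances and the remaining shard_size - k from the rest.
    """
    total = comb(instances, shard_size)
    return [
        comb(shard_size, k) * comb(instances - shard_size, shard_size - k) / total
        for k in range(shard_size + 1)
    ]


print(comb(50, 4))  # 230300
for k, p in enumerate(overlap_probabilities(50, 4)):
    print(f"share {k} instance(s): {p:.4%}")
```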

Randomly picking two tenants yields the following probabilities:

- 71% chance that they don't share any instance
- 26% chance that they share only 1 instance
- 2.7% chance that they share 2 instances
- 0.08% chance that they share 3 instances
- 0.0004% chance that their instances fully overlap

[//]: # "Diagram source of shuffle-sharding probability at https://docs.google.com/spreadsheets/d/1FXbiWTXi6bdERtamH-IfmpgFq1fNL4GP_KX_yJvbRi4/edit"

## Grafana Pyroscope shuffle sharding

Grafana Pyroscope supports shuffle sharding in the following components:

- [Ingesters](#ingesters-shuffle-sharding)
- [Query-frontend / Query-scheduler](#query-frontend-and-query-scheduler-shuffle-sharding)
- [Store-gateway](#store-gateway-shuffle-sharding)
- [Compactor](#compactor-shuffle-sharding)

When you run Grafana Pyroscope with the default configuration, shuffle sharding is disabled. To enable it, explicitly increase the shard size either globally or for a given tenant.

> **Note:** If the shard size value is equal to or higher than the number of available instances, for example, when `-distributor.ingestion-tenant-shard-size` is higher than the number of ingesters, shuffle sharding is disabled and all instances are used again.

### Guaranteed properties

The Grafana Pyroscope shuffle sharding implementation guarantees the following properties:

- **Stability**<br />
  Given a consistent state of the hash ring, the shuffle sharding algorithm always selects the same instances for a given tenant, even across different machines.
- **Consistency**<br />
  Adding or removing an instance from the hash ring leads to, at most, only one instance changed in each tenant's shard.
- **Shuffling**<br />
  Probabilistically and for a large enough cluster, shuffle sharding ensures that every tenant receives a different set of instances with a reduced number of overlapping instances between two tenants, which improves failure isolation.

### Ingesters shuffle sharding

By default, the Grafana Pyroscope distributor divides the received series among all running ingesters.

When you enable ingester shuffle sharding, the distributor on the write path divides each tenant's series among `-distributor.ingestion-tenant-shard-size` ingesters, while on the read path, the querier queries only the subset of ingesters that hold the series for a given tenant.

You can override the shard size on a per-tenant basis by setting `ingestion_tenant_shard_size` in the overrides section of the runtime configuration.

#### Ingesters write path

To enable shuffle sharding for ingesters on the write path, configure the following flag (or its respective YAML configuration option) on the distributor, ingester, and ruler:

- `-distributor.ingestion-tenant-shard-size=<size>`<br />
  `<size>`: Set the size to the number of ingesters that each tenant's series should be sharded to. If `<size>` is `0` or is equal to or greater than the number of available ingesters in the Grafana Pyroscope cluster, the tenant's series are sharded across all ingesters.

#### Ingesters read path

Assuming that you have enabled shuffle sharding for the write path, to enable shuffle sharding for ingesters on the read path, configure the following flag (or its respective YAML configuration option) on the querier:

- `-distributor.ingestion-tenant-shard-size=<size>`

The following flag is set appropriately by default to enable shuffle sharding for ingesters on the read path.
If you need to change its default:

- `-querier.shuffle-sharding-ingesters-enabled=true`<br />
  Explicitly enables or disables shuffle sharding for ingesters on the read path.
  - If shuffle sharding is enabled, queriers fetch in-memory series from the minimum set of required ingesters, selecting only ingesters that might have received series since the current time minus `-blocks-storage.tsdb.retention-period`. Otherwise, the request is sent to all ingesters.

If you enable ingesters shuffle sharding only for the write path, queriers on the read path always query all ingesters instead of only the subset of ingesters that belong to the tenant's shard.
Keeping ingesters shuffle sharding enabled only on the write path doesn't lead to incorrect query results, but might increase query latency.

#### Rollout strategy

If you're running a Grafana Pyroscope cluster with shuffle sharding disabled and want to enable it for the ingesters, use the following rollout strategy so that queries don't miss any series currently held in the ingesters:

1. Explicitly disable ingesters shuffle sharding on the read path with `-querier.shuffle-sharding-ingesters-enabled=false`, because it's enabled by default.
1. Enable ingesters shuffle sharding on the write path.
1. Enable ingesters shuffle sharding on the read path with `-querier.shuffle-sharding-ingesters-enabled=true`.

#### Limitation: Decreasing the tenant shard size

The current shuffle sharding implementation in Grafana Pyroscope has a limitation that prevents you from safely decreasing the tenant shard size when you enable ingesters shuffle sharding on the read path.

If a tenant's shard decreases in size, there is currently no way for the queriers to know how large the tenant shard was previously, and as a result, they might miss an ingester with data for that tenant.
Selecting ingesters by `-blocks-storage.tsdb.retention-period`, that is, only the ingesters that might have received series since the current time minus that period, doesn't find the complete tenant shard after the tenant shard size is decreased.

Although decreasing the tenant shard size is not supported, consider the following workaround:

1. Disable shuffle sharding on the read path with `-querier.shuffle-sharding-ingesters-enabled=false`.
1. Decrease the configured tenant shard size.
1. Wait for at least the amount of time specified with `-blocks-storage.tsdb.retention-period`.
1. Re-enable shuffle sharding on the read path with `-querier.shuffle-sharding-ingesters-enabled=true`.

### Query-frontend and query-scheduler shuffle sharding

By default, all Grafana Pyroscope queriers can execute queries for any tenant.

When you enable shuffle sharding by setting `-query-frontend.max-queriers-per-tenant` (or its respective YAML configuration option) to a value higher than `0` and lower than the number of available queriers, only the specified number of queriers are eligible to execute queries for a given tenant.

Note that this distribution happens in the query-frontend, or in the query-scheduler if you use one.
When using the query-scheduler, you must set the `-query-frontend.max-queriers-per-tenant` option for the query-scheduler component.
When you don't use the query-frontend (with or without the query-scheduler), this option is not available.

You can override the maximum number of queriers on a per-tenant basis by setting `max_queriers_per_tenant` in the overrides section of the runtime configuration.

#### The impact of a "query of death"

If a tenant sends a "query of death" that causes a querier to crash, the crashed querier is disconnected from the query-frontend or query-scheduler, and another running querier is immediately assigned to the tenant's shard.
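A simplified model of this reassignment, assuming that a tenant's queriers are recomputed from the queriers that are currently connected, looks like the following. The tenant-seeded sample is a hypothetical stand-in for the actual query-frontend and query-scheduler selection logic, and `querier_shard` is an illustrative name.

```python
import hashlib
import random


def querier_shard(tenant_id: str, connected: set[str], max_queriers: int) -> set[str]:
    """Pick the queriers allowed to run a tenant's queries (simplified model)."""
    ordered = sorted(connected)
    if max_queriers <= 0 or max_queriers >= len(ordered):
        return set(ordered)  # shuffle sharding disabled: every querier is eligible
    seed = int.from_bytes(hashlib.sha256(tenant_id.encode("utf-8")).digest()[:8], "big")
    return set(random.Random(seed).sample(ordered, max_queriers))


connected = {f"querier-{i}" for i in range(10)}
before = querier_shard("tenant-a", connected, 3)

# A "query of death" crashes one querier in the shard, which then disconnects.
crashed = min(before)
connected.discard(crashed)

# The shard is recomputed over the remaining queriers, so another querier
# takes the crashed one's place right away.
after = querier_shard("tenant-a", connected, 3)
print(sorted(before), "->", sorted(after))

# Repeating the crash: each recomputation pulls in a querier that has not
# crashed yet, until no queriers are left.
pool = {f"querier-{i}" for i in range(10)}
crashed_over_time = []
while pool:
    victim = min(querier_shard("tenant-a", pool, 3))
    crashed_over_time.append(victim)
    pool.discard(victim)
print(len(crashed_over_time))  # 10
```

In this model nothing slows the reassignment down, so a single tenant can eventually reach every querier in the pool.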

If the tenant repeatedly sends this query, the new querier assigned to the tenant's shard crashes as well, and yet another querier is assigned to the shard.
This cascading failure can cause all running queriers to crash, one by one, which invalidates the assumption that shuffle sharding contains the blast radius of queries of death.

To mitigate this negative impact, experimental configuration options let you configure a time delay between when a querier disconnects due to a crash and when the crashed querier is replaced by a healthy querier.
When you configure a time delay, a tenant that repeatedly sends a "query of death" runs with reduced querier capacity after a querier has crashed.
The tenant could end up with no available queriers, but this configuration reduces the likelihood that the crash impacts other tenants.

A delay of 1 minute might be a reasonable trade-off:

- Query-frontend: `-query-frontend.querier-forget-delay=1m`
- Query-scheduler: `-query-scheduler.querier-forget-delay=1m`

### Store-gateway shuffle sharding

By default, a tenant's blocks are divided among all Grafana Pyroscope store-gateways.

When you enable store-gateway shuffle sharding by setting `-store-gateway.tenant-shard-size` (or its respective YAML configuration option) to a value higher than `0` and lower than the number of available store-gateways, only the specified number of store-gateways are eligible to load and query blocks for a given tenant.
You must set this flag on both the store-gateway and the querier.

You can override the store-gateway shard size on a per-tenant basis by setting `store_gateway_tenant_shard_size` in the overrides section of the runtime configuration.

For more information about the store-gateway, refer to [store-gateway](../../reference-pyroscope-architecture/components/store-gateway/).
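The store-gateway rules follow the same pattern as the other components: a per-tenant value from the overrides section of the runtime configuration takes precedence over the global flag, and a value of `0`, or one that is not lower than the number of available instances, makes every instance eligible. In this minimal sketch, the dictionary stands in for the parsed overrides section, the global value of `6` is an assumed setting for `-store-gateway.tenant-shard-size`, and the helper names are hypothetical.

```python
def effective_shard_size(tenant_id: str, key: str, global_default: int,
                         overrides: dict[str, dict[str, int]]) -> int:
    """A per-tenant override from the runtime configuration wins over the global flag."""
    return overrides.get(tenant_id, {}).get(key, global_default)


def eligible_instances(shard_size: int, available: int) -> int:
    """Number of instances that may serve the tenant.

    0, or a value equal to or higher than the number of available instances,
    disables shuffle sharding, so every instance is eligible.
    """
    if shard_size <= 0 or shard_size >= available:
        return available
    return shard_size


KEY = "store_gateway_tenant_shard_size"
# Stand-in for the parsed overrides section of the runtime configuration.
overrides = {
    "tenant-a": {KEY: 3},
    "tenant-b": {KEY: 0},
}
GLOBAL_SHARD_SIZE = 6  # assumed value of -store-gateway.tenant-shard-size
AVAILABLE_STORE_GATEWAYS = 12

for tenant in ("tenant-a", "tenant-b", "tenant-c"):
    size = effective_shard_size(tenant, KEY, GLOBAL_SHARD_SIZE, overrides)
    print(tenant, eligible_instances(size, AVAILABLE_STORE_GATEWAYS))
```

Here `tenant-a` is limited to 3 store-gateways, `tenant-b` disables shuffle sharding with an override of `0`, and `tenant-c` falls back to the global value.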

### Compactor shuffle sharding

By default, tenant blocks can be compacted by any Grafana Pyroscope compactor.

When you enable compactor shuffle sharding by setting `-compactor.compactor-tenant-shard-size` (or its respective YAML configuration option) to a value higher than `0` and lower than the number of available compactors, only the specified number of compactors are eligible to compact blocks for a given tenant.

You can override the compactor shard size on a per-tenant basis by setting `compactor_tenant_shard_size` in the overrides section of the runtime configuration.

### Shuffle sharding impact to the KV store

Shuffle sharding doesn't add overhead to the KV store.
Shards are computed client-side and are not stored in the ring.
KV store sizing depends primarily on the number of replicas of any component that uses the ring, for example, ingesters, and the number of tokens per replica.

However, some components cache each tenant's shard in memory on the client side, which might slightly increase their memory footprint. This increase mostly affects the distributor.
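The following sketch illustrates this trade-off with a hypothetical `TenantShardCache` class, not Pyroscope's code. Shards are computed on the client from the ring's instance list, never written back to the KV store, and memoized per tenant, so repeated lookups are cheap while memory grows with the number of tenants. Dropping the whole cache on every ring change is a simplifying assumption.

```python
import hashlib
import random


class TenantShardCache:
    """Client-side cache of computed tenant shards (hypothetical sketch)."""

    def __init__(self, instances: list[str]) -> None:
        self._instances = sorted(instances)
        self._shards: dict[tuple[str, int], tuple[str, ...]] = {}
        self.computations = 0  # shards computed rather than served from cache

    def update_ring(self, instances: list[str]) -> None:
        """Simplification: any ring change drops every cached shard."""
        self._instances = sorted(instances)
        self._shards.clear()

    def shard(self, tenant_id: str, shard_size: int) -> tuple[str, ...]:
        key = (tenant_id, shard_size)
        if key not in self._shards:
            self.computations += 1
            if shard_size <= 0 or shard_size >= len(self._instances):
                chosen = list(self._instances)
            else:
                seed = int.from_bytes(hashlib.sha256(tenant_id.encode("utf-8")).digest()[:8], "big")
                chosen = random.Random(seed).sample(self._instances, shard_size)
            self._shards[key] = tuple(sorted(chosen))
        return self._shards[key]

    def cached_entries(self) -> int:
        return len(self._shards)


cache = TenantShardCache([f"ingester-{i}" for i in range(50)])
first = cache.shard("tenant-a", 4)
second = cache.shard("tenant-a", 4)  # served from memory, no recomputation
for n in range(100):
    cache.shard(f"tenant-{n}", 4)  # one cached entry per tenant
entries_before_ring_change = cache.cached_entries()
cache.update_ring([f"ingester-{i}" for i in range(51)])
third = cache.shard("tenant-a", 4)  # recomputed after the ring changed
print(cache.computations, entries_before_ring_change, cache.cached_entries())
```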