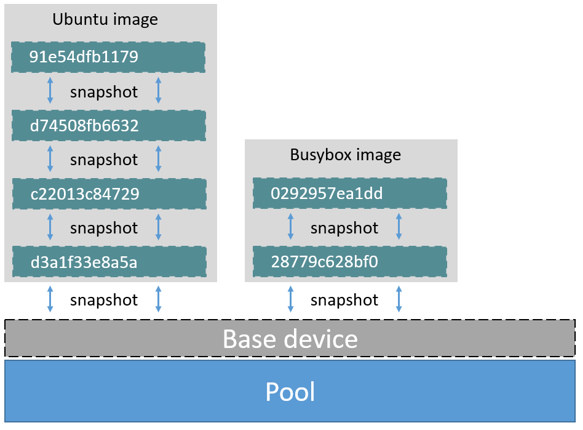

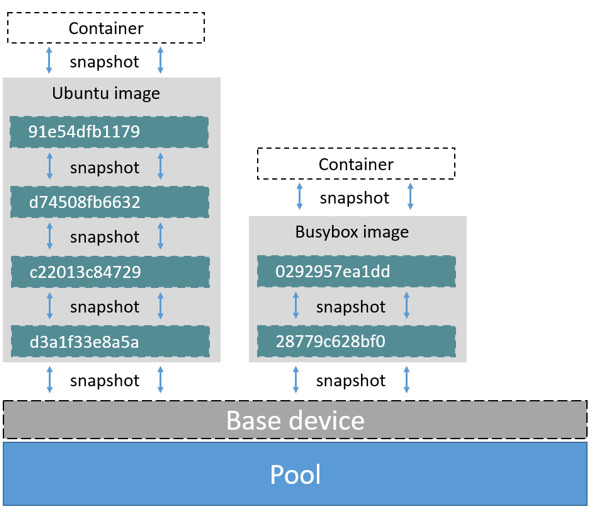

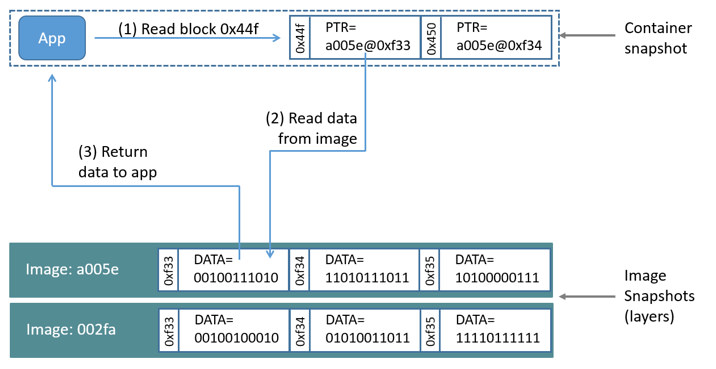

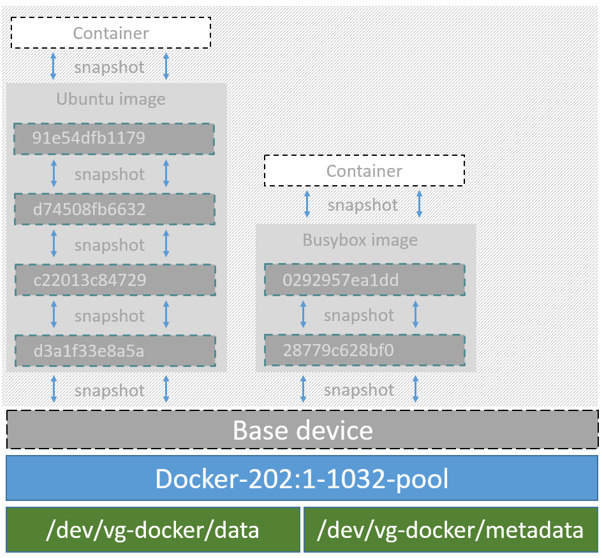

<!--[metadata]>
+++
title="Device mapper storage in practice"
description="Learn how to optimize your use of device mapper driver."
keywords=["container, storage, driver, device mapper"]
[menu.main]
parent="engine_driver"
+++
<![end-metadata]-->

# Docker and the Device Mapper storage driver

Device Mapper is a kernel-based framework that underpins many advanced
volume management technologies on Linux. Docker's `devicemapper` storage driver
leverages the thin provisioning and snapshotting capabilities of this framework
for image and container management. This article refers to the Device Mapper
storage driver as `devicemapper`, and the kernel framework as `Device Mapper`.

>**Note**: The [Commercially Supported Docker Engine (CS-Engine) running on RHEL
and CentOS Linux](https://www.docker.com/compatibility-maintenance) requires
that you use the `devicemapper` storage driver.

## An alternative to AUFS

Docker originally ran on Ubuntu and Debian Linux and used AUFS for its storage
backend. As Docker became popular, many of the companies that wanted to use it
were using Red Hat Enterprise Linux (RHEL). Unfortunately, because the upstream
mainline Linux kernel did not include AUFS, RHEL did not use AUFS either.

To correct this, Red Hat developers investigated getting AUFS into the mainline
kernel. Ultimately, though, they decided a better idea was to develop a new
storage backend. Moreover, they would base this new storage backend on existing
`Device Mapper` technology.

Red Hat collaborated with Docker Inc. to contribute this new driver. As a result
of this collaboration, Docker's Engine was re-engineered to make the storage
backend pluggable. So it was that `devicemapper` became the second storage
driver Docker supported.

Device Mapper has been included in the mainline Linux kernel since version
2.6.9. It is a core part of the RHEL family of Linux distributions. This means
that the `devicemapper` storage driver is based on stable code that has a lot of
real-world production deployments and strong community support.


## Image layering and sharing

The `devicemapper` driver stores every image and container on its own virtual
device. These devices are thin-provisioned copy-on-write snapshot devices.
Device Mapper technology works at the block level rather than the file level.
This means that the `devicemapper` storage driver's thin provisioning and
copy-on-write operations work with blocks rather than entire files.

>**Note**: Snapshots are also referred to as *thin devices* or *virtual
>devices*. They all mean the same thing in the context of the `devicemapper`
>storage driver.

With `devicemapper` the high level process for creating images is as follows:

1. The `devicemapper` storage driver creates a thin pool.

    The pool is created from block devices or loop mounted sparse files (more
    on this later).

2. Next it creates a *base device*.

    A base device is a thin device with a filesystem. You can see which
    filesystem is in use by running the `docker info` command and checking the
    `Backing filesystem` value.

3. Each new image (and image layer) is a snapshot of this base device.

    These are thin provisioned copy-on-write snapshots. This means that they
    are initially empty and only consume space from the pool when data is written
    to them.

With `devicemapper`, container layers are snapshots of the image they are
created from. Just as with images, container snapshots are thin provisioned
copy-on-write snapshots. The container snapshot stores all updates to the
container.
The `devicemapper` allocates space to them on-demand from the pool
as and when data is written to the container.

The high level diagram below shows a thin pool with a base device and two
images.

If you look closely at the diagram you'll see that it's snapshots all the way
down. Each image layer is a snapshot of the layer below it. The lowest layer of
each image is a snapshot of the base device that exists in the pool. This
base device is a `Device Mapper` artifact and not a Docker image layer.

A container is a snapshot of the image it is created from. The diagram below
shows two containers - one based on the Ubuntu image and the other based on the
Busybox image.


## Reads with the devicemapper

Let's look at how reads and writes occur using the `devicemapper` storage
driver. The diagram below shows the high level process for reading a single
block (`0x44f`) in an example container.

1. An application makes a read request for block `0x44f` in the container.

    Because the container is a thin snapshot of an image it does not have the
    data. Instead, it has a pointer (PTR) to where the data is stored in the image
    snapshot lower down in the image stack.

2. The storage driver follows the pointer to block `0xf33` in the snapshot
relating to image layer `a005...`.

3. The `devicemapper` copies the contents of block `0xf33` from the image
snapshot to memory in the container.

4. The storage driver returns the data to the requesting application.

## Write examples

With the `devicemapper` driver, writing new data to a container is accomplished
by an *allocate-on-demand* operation. Updating existing data uses a
copy-on-write operation. Because Device Mapper is a block-based technology
these operations occur at the block level.
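
As a rough illustration of what block-level allocation means for space
consumption, the following sketch computes how many blocks a write of a given
size would occupy. It assumes a 64KB block size, which is the value this
article uses for `devicemapper`. The `blocks_for_write` function name is
hypothetical and is not part of Docker or Device Mapper.

```bash
#!/bin/sh
# Assumed block size: 64KB, the figure used throughout this article.
BLOCK_SIZE=$((64 * 1024))

# Print the number of whole blocks needed to hold a write of $1 bytes.
# Rounds up, because a partially filled block still consumes a full block.
blocks_for_write() {
    echo $(( ($1 + BLOCK_SIZE - 1) / BLOCK_SIZE ))
}

blocks_for_write $((56 * 1024))    # 56KB write: prints 1
blocks_for_write $((200 * 1024))   # 200KB write: prints 4
```

The rounding is the point of the example: any write smaller than one block
still costs a full block in the pool.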

For example, when making a small change to a large file in a container, the
`devicemapper` storage driver does not copy the entire file. It only copies the
blocks to be modified. Each block is 64KB.

### Writing new data

To write 56KB of new data to a container:

1. An application makes a request to write 56KB of new data to the container.

2. The allocate-on-demand operation allocates a single new 64KB block to the
container's snapshot.

    If the write operation is larger than 64KB, multiple new blocks are
    allocated to the container's snapshot.

3. The data is written to the newly allocated block.

### Overwriting existing data

To modify existing data for the first time:

1. An application makes a request to modify some data in the container.

2. A copy-on-write operation locates the blocks that need updating.

3. The operation allocates new empty blocks to the container snapshot and
copies the data into those blocks.

4. The modified data is written into the newly allocated blocks.

The application in the container is unaware of any of these
allocate-on-demand and copy-on-write operations. However, they may add latency
to the application's read and write operations.

## Configure Docker with devicemapper

The `devicemapper` is the default Docker storage driver on some Linux
distributions. This includes RHEL and most of its forks. Currently, the
following distributions support the driver:

* RHEL/CentOS/Fedora
* Ubuntu 12.04
* Ubuntu 14.04
* Debian

Docker hosts running the `devicemapper` storage driver default to a
configuration mode known as `loop-lvm`. This mode uses sparse files to build
the thin pool used by image and container snapshots. The mode is designed to
work out-of-the-box with no additional configuration.
However, production
deployments should not run under `loop-lvm` mode.

You can detect the mode by viewing the output of the `docker info` command:

```bash
$ sudo docker info
Containers: 0
Images: 0
Storage Driver: devicemapper
 Pool Name: docker-202:2-25220302-pool
 Pool Blocksize: 65.54 kB
 Backing Filesystem: xfs
 [...]
 Data loop file: /var/lib/docker/devicemapper/devicemapper/data
 Metadata loop file: /var/lib/docker/devicemapper/devicemapper/metadata
 Library Version: 1.02.93-RHEL7 (2015-01-28)
 [...]
```

The output above shows a Docker host running with the `devicemapper` storage
driver operating in `loop-lvm` mode. This is indicated by the fact that the
`Data loop file` and `Metadata loop file` are files under
`/var/lib/docker/devicemapper/devicemapper`. These are loopback mounted sparse
files.

### Configure direct-lvm mode for production

The preferred configuration for production deployments is `direct-lvm`. This
mode uses block devices to create the thin pool. The following procedure shows
you how to configure a Docker host to use the `devicemapper` storage driver in
a `direct-lvm` configuration.

> **Caution:** If you have already run the Docker daemon on your Docker host
> and have images you want to keep, `push` them to Docker Hub or your private
> Docker Trusted Registry before attempting this procedure.

The procedure below will create a logical volume configured as a thin pool to
use as backing for the storage pool. It assumes that you have a spare block
device at `/dev/xvdf` with enough free space to complete the task. The device
identifier and volume sizes may be different in your environment and you
should substitute your own values throughout the procedure. The procedure also
assumes that the Docker daemon is in the `stopped` state.

1. Log in to the Docker host you want to configure and stop the Docker daemon.

2. Install the LVM2 package.
    The LVM2 package includes the userspace toolset that provides logical volume
    management facilities on Linux.

3. Create a physical volume replacing `/dev/xvdf` with your block device.

    ```bash
    $ pvcreate /dev/xvdf
    ```

4. Create a 'docker' volume group.

    ```bash
    $ vgcreate docker /dev/xvdf
    ```

5. Create a thin pool named `thinpool`.

    In this example, the data logical volume is 95% of the 'docker' volume
    group size. Leaving this free space allows for auto expanding of either the
    data or metadata if space runs low as a temporary stopgap.

    ```bash
    $ lvcreate --wipesignatures y -n thinpool docker -l 95%VG
    $ lvcreate --wipesignatures y -n thinpoolmeta docker -l 1%VG
    ```

6. Convert the pool to a thin pool.

    ```bash
    $ lvconvert -y --zero n -c 512K --thinpool docker/thinpool --poolmetadata docker/thinpoolmeta
    ```

7. Configure autoextension of thin pools via an `lvm` profile.

    ```bash
    $ vi /etc/lvm/profile/docker-thinpool.profile
    ```

8. Specify the `thin_pool_autoextend_threshold` value.

    The value should be the percentage of space used before `lvm` attempts
    to autoextend the available space (100 = disabled).

    ```
    thin_pool_autoextend_threshold = 80
    ```

9. Modify the `thin_pool_autoextend_percent` value.

    The value is the percentage by which to increase the size of the thin pool
    when autoextension occurs (0 = disabled).

    ```
    thin_pool_autoextend_percent = 20
    ```

10. Check your work. Your `docker-thinpool.profile` file should appear similar to the following:

    An example `/etc/lvm/profile/docker-thinpool.profile` file:

    ```
    activation {
        thin_pool_autoextend_threshold=80
        thin_pool_autoextend_percent=20
    }
    ```

11. Apply your new lvm profile.

    ```bash
    $ lvchange --metadataprofile docker-thinpool docker/thinpool
    ```

12. Verify the `lv` is monitored.

    ```bash
    $ lvs -o+seg_monitor
    ```

13. If the Docker daemon was previously started, clear your graph driver directory.

    Clearing your graph driver removes any images, containers, and volumes in your
    Docker installation.

    ```bash
    $ rm -rf /var/lib/docker/*
    ```

14. Configure the Docker daemon with specific devicemapper options.

    There are two ways to do this. You can set options on the command line if you start the daemon there:

    ```bash
    --storage-driver=devicemapper --storage-opt=dm.thinpooldev=/dev/mapper/docker-thinpool --storage-opt dm.use_deferred_removal=true
    ```

    You can also set them for startup in the `daemon.json` configuration, for example:

    ```json
    {
      "storage-driver": "devicemapper",
      "storage-opts": [
        "dm.thinpooldev=/dev/mapper/docker-thinpool",
        "dm.use_deferred_removal=true"
      ]
    }
    ```

15. If using systemd and modifying the daemon configuration via unit or drop-in file, reload systemd to scan for changes.

    ```bash
    $ systemctl daemon-reload
    ```

16. Start the Docker daemon.

    ```bash
    $ systemctl start docker
    ```

After you start the Docker daemon, ensure you monitor your thin pool and volume
group free space. While the thin pool will auto-extend, the volume group can
still fill up. To monitor logical volumes, use `lvs` without options or `lvs -a`
to see the data and metadata sizes. To monitor volume group free space, use the
`vgs` command.
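
The monitoring advice above can be scripted. The sketch below is a minimal
example, assuming the `docker/thinpool` logical volume created in this
procedure and an illustrative warning level of 80%. The `over_threshold` and
`check_thinpool` helper names are hypothetical. It relies on the `data_percent`
and `metadata_percent` report fields of `lvs`, so run `lvs -o help` on your
host to confirm that your LVM version provides them.

```bash
#!/bin/sh
# Succeed when a usage percentage ($1) is at or above a limit ($2).
# lvs prints values such as "81.25"; the fractional part is dropped.
over_threshold() {
    used=${1%%.*}
    [ "${used:-0}" -ge "$2" ]
}

# Warn when data or metadata usage of a thin pool reaches the limit.
# The LV name and the limit are assumptions; adjust them for your host.
check_thinpool() {
    lv=${1:-docker/thinpool}
    limit=${2:-80}
    # Word splitting is intended: lvs prints both fields on one line.
    # LC_ALL=C keeps the decimal separator a dot.
    set -- $(LC_ALL=C lvs --noheadings -o data_percent,metadata_percent "$lv")
    if over_threshold "$1" "$limit" || over_threshold "$2" "$limit"; then
        echo "WARNING: $lv data=$1% metadata=$2% (limit ${limit}%)"
    fi
}
```

Call `check_thinpool` as root, for example from a periodic job. With no
arguments it checks `docker/thinpool` against the 80% level.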

Logs can show the auto-extension of the thin pool when it hits the threshold.
To view the logs use:

```bash
$ journalctl -fu dm-event.service
```

If you run into repeated problems with the thin pool, you can use the
`dm.min_free_space` option to tune the Engine behavior. This value ensures that
operations fail with a warning when the free space is at or near the minimum.
For information, see <a
href="../../../reference/commandline/dockerd.md#storage-driver-options"
target="_blank">the storage driver options in the Engine daemon reference</a>.


### Examine devicemapper structures on the host

You can use the `lsblk` command to see the device files created above and the
`pool` that the `devicemapper` storage driver creates on top of them.

```bash
$ sudo lsblk
NAME                       MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
xvda                       202:0    0    8G  0 disk
└─xvda1                    202:1    0    8G  0 part /
xvdf                       202:80   0   10G  0 disk
├─vg--docker-data          253:0    0   90G  0 lvm
│ └─docker-202:1-1032-pool 253:2    0   10G  0 dm
└─vg--docker-metadata      253:1    0    4G  0 lvm
  └─docker-202:1-1032-pool 253:2    0   10G  0 dm
```

The diagram below shows the image from prior examples updated with the detail
from the `lsblk` command above.

In the diagram, the pool is named `Docker-202:1-1032-pool` and spans the `data`
and `metadata` devices created earlier. The `devicemapper` constructs the pool
name as follows:

```
Docker-MAJ:MIN-INO-pool
```

`MAJ`, `MIN` and `INO` refer to the major and minor device numbers and inode.

Because Device Mapper operates at the block level it is more difficult to see
diffs between image layers and containers. Docker 1.10 and later no longer
matches image layer IDs with directory names in `/var/lib/docker`. However,
there are two key directories.
The `/var/lib/docker/devicemapper/mnt` directory
contains the mount points for image and container layers. The
`/var/lib/docker/devicemapper/metadata` directory contains one file for every
image layer and container snapshot. The files contain metadata about each
snapshot in JSON format.

## Increase capacity on a running device

You can increase the capacity of the pool on a running thin-pool device. This is
useful if the data's logical volume is full and the volume group is at full
capacity.

### For a loop-lvm configuration

In this scenario, the thin pool is configured to use `loop-lvm` mode. To show
the specifics of the existing configuration use `docker info`:

```bash
$ sudo docker info
Containers: 0
 Running: 0
 Paused: 0
 Stopped: 0
Images: 2
Server Version: 1.11.0-rc2
Storage Driver: devicemapper
 Pool Name: docker-8:1-123141-pool
 Pool Blocksize: 65.54 kB
 Base Device Size: 10.74 GB
 Backing Filesystem: ext4
 Data file: /dev/loop0
 Metadata file: /dev/loop1
 Data Space Used: 1.202 GB
 Data Space Total: 107.4 GB
 Data Space Available: 4.506 GB
 Metadata Space Used: 1.729 MB
 Metadata Space Total: 2.147 GB
 Metadata Space Available: 2.146 GB
 Udev Sync Supported: true
 Deferred Removal Enabled: false
 Deferred Deletion Enabled: false
 Deferred Deleted Device Count: 0
 Data loop file: /var/lib/docker/devicemapper/devicemapper/data
 WARNING: Usage of loopback devices is strongly discouraged for production use. Either use `--storage-opt dm.thinpooldev` or use `--storage-opt dm.no_warn_on_loop_devices=true` to suppress this warning.
 Metadata loop file: /var/lib/docker/devicemapper/devicemapper/metadata
 Library Version: 1.02.90 (2014-09-01)
Logging Driver: json-file
[...]
```

The `Data Space` values show that the pool is 100GB total. This example extends the pool to 200GB.

1. List the sizes of the devices.

    ```bash
    $ sudo ls -lh /var/lib/docker/devicemapper/devicemapper/
    total 1175492
    -rw------- 1 root root 100G Mar 30 05:22 data
    -rw------- 1 root root 2.0G Mar 31 11:17 metadata
    ```

2. Truncate the `data` file to the new size (approximately 200GB).

    ```bash
    $ sudo truncate -s 214748364800 /var/lib/docker/devicemapper/devicemapper/data
    ```

3. Verify the file size changed.

    ```bash
    $ sudo ls -lh /var/lib/docker/devicemapper/devicemapper/
    total 1.2G
    -rw------- 1 root root 200G Apr 14 08:47 data
    -rw------- 1 root root 2.0G Apr 19 13:27 metadata
    ```

4. Reload the data loop device.

    ```bash
    $ sudo blockdev --getsize64 /dev/loop0
    107374182400
    $ sudo losetup -c /dev/loop0
    $ sudo blockdev --getsize64 /dev/loop0
    214748364800
    ```

5. Reload the devicemapper thin pool.

    a. Get the pool name first.

    ```bash
    $ sudo dmsetup status | grep pool
    docker-8:1-123141-pool: 0 209715200 thin-pool 91 422/524288 18338/1638400 - rw discard_passdown queue_if_no_space -
    ```

    The name is the string before the colon.

    b. Dump the device mapper table.

    ```bash
    $ sudo dmsetup table docker-8:1-123141-pool
    0 209715200 thin-pool 7:1 7:0 128 32768 1 skip_block_zeroing
    ```

    c. Calculate the real total sectors of the thin pool now.

    Change the second number of the table info (i.e. the disk end sector) to
    reflect the new number of 512 byte sectors in the disk. For example, as the
    new loop size is 200GB, change the second number to 419430400.

    d. Reload the thin pool with the new sector number.

    ```bash
    $ sudo dmsetup suspend docker-8:1-123141-pool \
        && sudo dmsetup reload docker-8:1-123141-pool --table '0 419430400 thin-pool 7:1 7:0 128 32768 1 skip_block_zeroing' \
        && sudo dmsetup resume docker-8:1-123141-pool
    ```

#### The device_tool

The Docker project's `contrib` directory contains tools that are not part of
the core distribution. These tools are often useful but can also be
out-of-date. <a href="https://goo.gl/wNfDTi">In this directory is the
`device_tool.go`</a> tool, which you can also use to resize the loop-lvm thin
pool.

To use the tool, compile it first. Then, do the following to resize the pool:

```bash
$ ./device_tool resize 200GB
```

### For a direct-lvm mode configuration

In this example, you extend the capacity of a running device that uses the
`direct-lvm` configuration. This example assumes you are using the `/dev/sdh1`
disk partition.

1. Extend the volume group (VG) `vg-docker`.

    ```bash
    $ sudo vgextend vg-docker /dev/sdh1
    Volume group "vg-docker" successfully extended
    ```

    Your volume group may use a different name.

2. Extend the `data` logical volume (LV) `vg-docker/data`.

    ```bash
    $ sudo lvextend -l+100%FREE -n vg-docker/data
    Extending logical volume data to 200 GiB
    Logical volume data successfully resized
    ```

3. Reload the devicemapper thin pool.

    a. Get the pool name.

    ```bash
    $ sudo dmsetup status | grep pool
    docker-253:17-1835016-pool: 0 96460800 thin-pool 51593 6270/1048576 701943/753600 - rw no_discard_passdown queue_if_no_space
    ```

    The name is the string before the colon.

    b. Dump the device mapper table.

    ```bash
    $ sudo dmsetup table docker-253:17-1835016-pool
    0 96460800 thin-pool 252:0 252:1 128 32768 1 skip_block_zeroing
    ```

    c. Calculate the real total sectors of the thin pool now. You can use `blockdev` to get the real size of the data LV.

    Change the second number of the table info (i.e. the number of sectors) to
    reflect the new number of 512 byte sectors in the disk. For example, as the
    new data `lv` size is `264132100096` bytes, change the second number to
    `515883008`.

    ```bash
    $ sudo blockdev --getsize64 /dev/vg-docker/data
    264132100096
    ```

    d. Then reload the thin pool with the new sector number.

    ```bash
    $ sudo dmsetup suspend docker-253:17-1835016-pool \
        && sudo dmsetup reload docker-253:17-1835016-pool --table '0 515883008 thin-pool 252:0 252:1 128 32768 1 skip_block_zeroing' \
        && sudo dmsetup resume docker-253:17-1835016-pool
    ```

## Device Mapper and Docker performance

It is important to understand the impact that allocate-on-demand and
copy-on-write operations can have on overall container performance.

### Allocate-on-demand performance impact

The `devicemapper` storage driver allocates new blocks to a container via an
allocate-on-demand operation. This means that each time an app writes to
somewhere new inside a container, one or more empty blocks have to be located
from the pool and mapped into the container.

All blocks are 64KB. A write that uses less than 64KB still results in a single
64KB block being allocated. Writing more than 64KB of data uses multiple 64KB
blocks. This can impact container performance, especially in containers that
perform lots of small writes. However, once a block is allocated to a container
subsequent reads and writes can operate directly on that block.

### Copy-on-write performance impact

Each time a container updates existing data for the first time, the
`devicemapper` storage driver has to perform a copy-on-write operation.
This
copies the data from the image snapshot to the container's snapshot. This
process can have a noticeable impact on container performance.

All copy-on-write operations have a 64KB granularity. As a result, updating
32KB of a 1GB file causes the driver to copy a single 64KB block into the
container's snapshot. This has obvious performance advantages over file-level
copy-on-write operations which would require copying the entire 1GB file into
the container layer.

In practice, however, containers that perform lots of small block writes
(<64KB) can perform worse with `devicemapper` than with AUFS.

### Other device mapper performance considerations

There are several other things that impact the performance of the
`devicemapper` storage driver.

- **The mode.** The default mode for Docker running the `devicemapper` storage
driver is `loop-lvm`. This mode uses sparse files and suffers from poor
performance. It is **not recommended for production**. The recommended mode for
production environments is `direct-lvm` where the storage driver writes
directly to raw block devices.

- **High speed storage.** For best performance you should place the `Data file`
and `Metadata file` on high speed storage such as SSD. This can be direct
attached storage or from a SAN or NAS array.

- **Memory usage.** `devicemapper` is not the most memory efficient Docker
storage driver. Launching *n* copies of the same container loads *n* copies of
its files into memory. This can have a memory impact on your Docker host. As a
result, the `devicemapper` storage driver may not be the best choice for PaaS
and other high density use cases.

One final point: data volumes provide the best and most predictable
performance.
This is because they bypass the storage driver and do not incur
any of the potential overheads introduced by thin provisioning and
copy-on-write. For this reason, you should place heavy write workloads on
data volumes.

## Related Information

* [Understand images, containers, and storage drivers](imagesandcontainers.md)
* [Select a storage driver](selectadriver.md)
* [AUFS storage driver in practice](aufs-driver.md)
* [Btrfs storage driver in practice](btrfs-driver.md)
* [daemon reference](../../reference/commandline/dockerd.md#storage-driver-options)