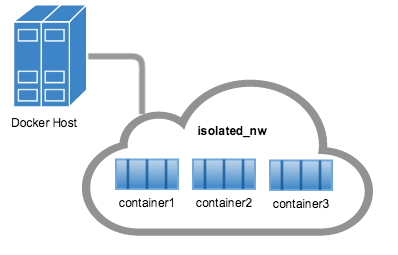

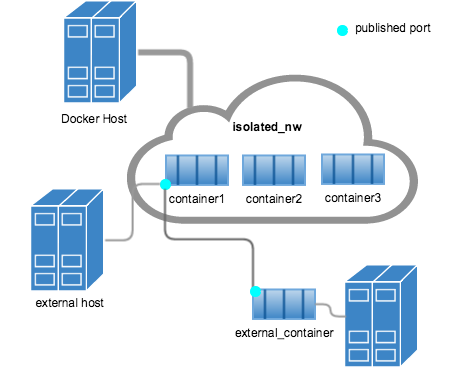

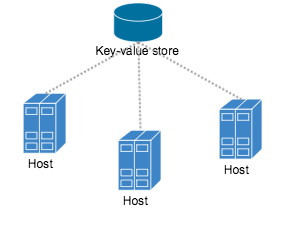

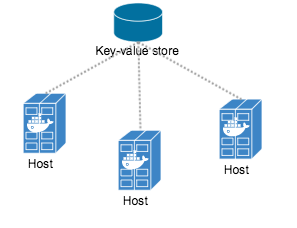

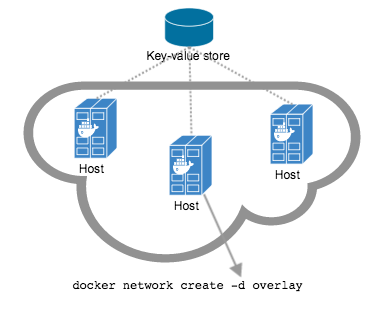

<!--[metadata]>
+++
aliases=[
"/engine/userguide/networking/dockernetworks/"
]
title = "Docker container networking"
description = "How do we connect docker containers within and across hosts?"
keywords = ["Examples, Usage, network, docker, documentation, user guide, multihost, cluster"]
[menu.main]
identifier="networking_index"
parent = "smn_networking"
weight = -5
+++
<![end-metadata]-->

# Understand Docker container networks

This section provides an overview of the default networking behavior that Docker
Engine delivers natively. It describes the types of networks created by default
and how to create your own, user-defined networks. It also describes the
resources required to create networks on a single host or across a cluster of
hosts.

## Default Networks

When you install Docker, it creates three networks automatically. You can list
these networks using the `docker network ls` command:

```
$ docker network ls

NETWORK ID          NAME                DRIVER
7fca4eb8c647        bridge              bridge
9f904ee27bf5        none                null
cf03ee007fb4        host                host
```

Historically, these three networks are part of Docker's implementation. When
you run a container, you can use the `--network` flag to specify which network
you want to run the container on. These three networks are still available to you.

The `bridge` network represents the `docker0` network present in all Docker
installations. Unless you specify otherwise with the `docker run --network=<NETWORK>`
option, the Docker daemon connects containers to this network by default. You can
see this bridge as part of a host's network stack by using the `ifconfig` command
on the host.

```
$ ifconfig

docker0   Link encap:Ethernet  HWaddr 02:42:47:bc:3a:eb
          inet addr:172.17.0.1  Bcast:0.0.0.0  Mask:255.255.0.0
          inet6 addr: fe80::42:47ff:febc:3aeb/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:9001  Metric:1
          RX packets:17 errors:0 dropped:0 overruns:0 frame:0
          TX packets:8 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1100 (1.1 KB)  TX bytes:648 (648.0 B)
```

The `none` network adds a container to a container-specific network stack. That
container has no network interface other than the loopback interface (`lo`).
If you attach to such a container and look at its stack, you see this:

```
$ docker attach nonenetcontainer

root@0cb243cd1293:/# cat /etc/hosts
127.0.0.1       localhost
::1             localhost ip6-localhost ip6-loopback
fe00::0         ip6-localnet
ff00::0         ip6-mcastprefix
ff02::1         ip6-allnodes
ff02::2         ip6-allrouters
root@0cb243cd1293:/# ifconfig
lo        Link encap:Local Loopback
          inet addr:127.0.0.1  Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING  MTU:65536  Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:0 (0.0 B)  TX bytes:0 (0.0 B)

root@0cb243cd1293:/#
```

>**Note**: You can detach from the container and leave it running with `CTRL-p CTRL-q`.

The `host` network adds a container on the host's network stack. You'll find the
network configuration inside the container is identical to the host's.

With the exception of the `bridge` network, you really don't need to
interact with these default networks. While you can list and inspect them, you
cannot remove them. They are required by your Docker installation. However, you
can add your own user-defined networks, and you can remove these when you no
longer need them. Before you learn more about creating your own networks, it is
worth looking at the default `bridge` network a bit.
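
The predefined `bridge`, `host`, and `none` networks cannot be removed. Any cleanup script therefore has to tell them apart from user-defined networks. The following is a minimal sketch that parses the text of a `docker network ls` listing rather than calling Docker. It assumes the three-column layout (NETWORK ID, NAME, DRIVER) shown in this guide. The function name `removable_networks` is hypothetical.

```python
from typing import List

# Networks Docker creates at install time. Per the text above, you can
# list and inspect them but cannot remove them.
PREDEFINED = {"bridge", "host", "none"}


def removable_networks(ls_output: str) -> List[str]:
    """Return the names of user-defined networks in `docker network ls` text.

    Assumes the NETWORK ID / NAME / DRIVER layout shown in this guide,
    where NAME is the second whitespace-separated field of each row.
    """
    names = []
    for line in ls_output.strip().splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) < 2:
            continue  # ignore blank or malformed rows
        if fields[1] not in PREDEFINED:
            names.append(fields[1])
    return names


# Sample input mirrors the listing shown later in the bridge-network section.
sample = """\
NETWORK ID          NAME                DRIVER
9f904ee27bf5        none                null
cf03ee007fb4        host                host
7fca4eb8c647        bridge              bridge
c5ee82f76de3        isolated_nw         bridge
"""

print(removable_networks(sample))  # ['isolated_nw']
```

Matching on the NAME column rather than on the driver is deliberate. A user-defined network such as `isolated_nw` also uses the `bridge` driver, so the driver alone cannot tell it apart from the default `bridge` network.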

### The default bridge network in detail

The default `bridge` network is present on all Docker hosts. The `docker network inspect`
command returns information about a network:

```
$ docker network inspect bridge

[
    {
        "Name": "bridge",
        "Id": "f7ab26d71dbd6f557852c7156ae0574bbf62c42f539b50c8ebde0f728a253b6f",
        "Scope": "local",
        "Driver": "bridge",
        "IPAM": {
            "Driver": "default",
            "Config": [
                {
                    "Subnet": "172.17.0.1/16",
                    "Gateway": "172.17.0.1"
                }
            ]
        },
        "Containers": {},
        "Options": {
            "com.docker.network.bridge.default_bridge": "true",
            "com.docker.network.bridge.enable_icc": "true",
            "com.docker.network.bridge.enable_ip_masquerade": "true",
            "com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",
            "com.docker.network.bridge.name": "docker0",
            "com.docker.network.driver.mtu": "9001"
        },
        "Labels": {}
    }
]
```

The Engine automatically creates a `Subnet` and `Gateway` for the network.
The `docker run` command automatically adds new containers to this network.

```
$ docker run -itd --name=container1 busybox

3386a527aa08b37ea9232cbcace2d2458d49f44bb05a6b775fba7ddd40d8f92c

$ docker run -itd --name=container2 busybox

94447ca479852d29aeddca75c28f7104df3c3196d7b6d83061879e339946805c
```

Inspecting the `bridge` network again after starting two containers shows both newly launched containers in the network.
Their IDs show up in the "Containers" section of `docker network inspect`:

```
$ docker network inspect bridge

[
    {
        "Name": "bridge",
        "Id": "f7ab26d71dbd6f557852c7156ae0574bbf62c42f539b50c8ebde0f728a253b6f",
        "Scope": "local",
        "Driver": "bridge",
        "IPAM": {
            "Driver": "default",
            "Config": [
                {
                    "Subnet": "172.17.0.1/16",
                    "Gateway": "172.17.0.1"
                }
            ]
        },
        "Containers": {
            "3386a527aa08b37ea9232cbcace2d2458d49f44bb05a6b775fba7ddd40d8f92c": {
                "EndpointID": "647c12443e91faf0fd508b6edfe59c30b642abb60dfab890b4bdccee38750bc1",
                "MacAddress": "02:42:ac:11:00:02",
                "IPv4Address": "172.17.0.2/16",
                "IPv6Address": ""
            },
            "94447ca479852d29aeddca75c28f7104df3c3196d7b6d83061879e339946805c": {
                "EndpointID": "b047d090f446ac49747d3c37d63e4307be745876db7f0ceef7b311cbba615f48",
                "MacAddress": "02:42:ac:11:00:03",
                "IPv4Address": "172.17.0.3/16",
                "IPv6Address": ""
            }
        },
        "Options": {
            "com.docker.network.bridge.default_bridge": "true",
            "com.docker.network.bridge.enable_icc": "true",
            "com.docker.network.bridge.enable_ip_masquerade": "true",
            "com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",
            "com.docker.network.bridge.name": "docker0",
            "com.docker.network.driver.mtu": "9001"
        },
        "Labels": {}
    }
]
```

The `docker network inspect` command above shows all the connected containers and
their network resources on a given network. Containers in this default network are
able to communicate with each other using IP addresses. Docker does not support
automatic service discovery on the default bridge network. If you want containers
to communicate using container names on this default bridge network, you must
connect them with the legacy `docker run --link` option.
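
On the default bridge network, containers reach each other by IP address. It can therefore help to pull those addresses out of the `docker network inspect` output. The sketch below assumes the JSON layout shown above: a list of network objects, each with a `Containers` map keyed by container ID. The function name `container_ips` is hypothetical, and the input is a trimmed copy of the sample output rather than a live call to Docker.

```python
import json
from typing import Dict


def container_ips(inspect_json: str) -> Dict[str, str]:
    """Map each container ID to its IPv4 address, without the prefix length."""
    networks = json.loads(inspect_json)  # the command prints a JSON array
    result = {}
    for network in networks:
        # "Containers" may be empty, or null on some networks.
        for container_id, endpoint in (network.get("Containers") or {}).items():
            address = endpoint.get("IPv4Address", "")
            result[container_id] = address.split("/", 1)[0]  # "172.17.0.2/16" -> "172.17.0.2"
    return result


# Trimmed copy of the `docker network inspect bridge` output above.
sample = """
[
    {
        "Name": "bridge",
        "Containers": {
            "3386a527aa08b37ea9232cbcace2d2458d49f44bb05a6b775fba7ddd40d8f92c": {
                "IPv4Address": "172.17.0.2/16"
            },
            "94447ca479852d29aeddca75c28f7104df3c3196d7b6d83061879e339946805c": {
                "IPv4Address": "172.17.0.3/16"
            }
        }
    }
]
"""

for cid, ip in container_ips(sample).items():
    print(cid[:12], ip)  # short IDs, as they appear in each container's /etc/hosts
# 3386a527aa08 172.17.0.2
# 94447ca47985 172.17.0.3
```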

You can `attach` to a running container and investigate its configuration:

```
$ docker attach container1

root@0cb243cd1293:/# ifconfig
eth0      Link encap:Ethernet  HWaddr 02:42:AC:11:00:02
          inet addr:172.17.0.2  Bcast:0.0.0.0  Mask:255.255.0.0
          inet6 addr: fe80::42:acff:fe11:2/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:9001  Metric:1
          RX packets:16 errors:0 dropped:0 overruns:0 frame:0
          TX packets:8 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1296 (1.2 KiB)  TX bytes:648 (648.0 B)

lo        Link encap:Local Loopback
          inet addr:127.0.0.1  Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING  MTU:65536  Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:0 (0.0 B)  TX bytes:0 (0.0 B)
```

Then use `ping` to send ICMP requests for three seconds (`-w3`) and test the
connectivity of the containers on this `bridge` network.

```
root@0cb243cd1293:/# ping -w3 172.17.0.3

PING 172.17.0.3 (172.17.0.3): 56 data bytes
64 bytes from 172.17.0.3: seq=0 ttl=64 time=0.096 ms
64 bytes from 172.17.0.3: seq=1 ttl=64 time=0.080 ms
64 bytes from 172.17.0.3: seq=2 ttl=64 time=0.074 ms

--- 172.17.0.3 ping statistics ---
3 packets transmitted, 3 packets received, 0% packet loss
round-trip min/avg/max = 0.074/0.083/0.096 ms
```

Finally, use the `cat` command to check the `container1` network configuration:

```
root@0cb243cd1293:/# cat /etc/hosts

172.17.0.2      3386a527aa08
127.0.0.1       localhost
::1             localhost ip6-localhost ip6-loopback
fe00::0         ip6-localnet
ff00::0         ip6-mcastprefix
ff02::1         ip6-allnodes
ff02::2         ip6-allrouters
```

To detach from `container1` and leave it running, use `CTRL-p CTRL-q`. Then, attach to `container2` and repeat these three commands.

```
$ docker attach container2

root@0cb243cd1293:/# ifconfig
eth0      Link encap:Ethernet  HWaddr 02:42:AC:11:00:03
          inet addr:172.17.0.3  Bcast:0.0.0.0  Mask:255.255.0.0
          inet6 addr: fe80::42:acff:fe11:3/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:9001  Metric:1
          RX packets:15 errors:0 dropped:0 overruns:0 frame:0
          TX packets:13 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1166 (1.1 KiB)  TX bytes:1026 (1.0 KiB)

lo        Link encap:Local Loopback
          inet addr:127.0.0.1  Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING  MTU:65536  Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:0 (0.0 B)  TX bytes:0 (0.0 B)

root@0cb243cd1293:/# ping -w3 172.17.0.2

PING 172.17.0.2 (172.17.0.2): 56 data bytes
64 bytes from 172.17.0.2: seq=0 ttl=64 time=0.067 ms
64 bytes from 172.17.0.2: seq=1 ttl=64 time=0.075 ms
64 bytes from 172.17.0.2: seq=2 ttl=64 time=0.072 ms

--- 172.17.0.2 ping statistics ---
3 packets transmitted, 3 packets received, 0% packet loss
round-trip min/avg/max = 0.067/0.071/0.075 ms
/ # cat /etc/hosts
172.17.0.3      94447ca47985
127.0.0.1       localhost
::1             localhost ip6-localhost ip6-loopback
fe00::0         ip6-localnet
ff00::0         ip6-mcastprefix
ff02::1         ip6-allnodes
ff02::2         ip6-allrouters
```

The default `docker0` bridge network supports the use of port mapping and
`docker run --link` to allow communication between containers in the `docker0`
network. These techniques are cumbersome to set up and prone to error. While they
are still available to you, it is better to avoid them and define your own bridge
networks instead.

## User-defined networks

You can create your own user-defined networks that better isolate containers.
Docker provides some default **network drivers** for creating these networks.
You can create a new **bridge network**, **overlay network** or **MACVLAN network**.
You can also create a **network plugin** or **remote network** written to your own
specifications.

You can create multiple networks. You can add containers to more than one
network. Containers can only communicate within networks, not across
networks. A container attached to two networks can communicate with member
containers in either network. When a container is connected to multiple
networks, its external connectivity is provided via the first non-internal
network, in lexical order.

The next few sections describe each of Docker's built-in network drivers in
greater detail.

### A bridge network

The easiest user-defined network to create is a `bridge` network. This network
is similar to the historical, default `docker0` network. There are some added
features and some old features that aren't available.

```
$ docker network create --driver bridge isolated_nw
1196a4c5af43a21ae38ef34515b6af19236a3fc48122cf585e3f3054d509679b

$ docker network inspect isolated_nw

[
    {
        "Name": "isolated_nw",
        "Id": "1196a4c5af43a21ae38ef34515b6af19236a3fc48122cf585e3f3054d509679b",
        "Scope": "local",
        "Driver": "bridge",
        "IPAM": {
            "Driver": "default",
            "Config": [
                {
                    "Subnet": "172.21.0.0/16",
                    "Gateway": "172.21.0.1/16"
                }
            ]
        },
        "Containers": {},
        "Options": {},
        "Labels": {}
    }
]

$ docker network ls

NETWORK ID          NAME                DRIVER
9f904ee27bf5        none                null
cf03ee007fb4        host                host
7fca4eb8c647        bridge              bridge
c5ee82f76de3        isolated_nw         bridge

```

After you create the network, you can launch containers on it using the `docker run --network=<NETWORK>` option.

```
$ docker run --network=isolated_nw -itd --name=container3 busybox

8c1a0a5be480921d669a073393ade66a3fc49933f08bcc5515b37b8144f6d47c

$ docker network inspect isolated_nw
[
    {
        "Name": "isolated_nw",
        "Id": "1196a4c5af43a21ae38ef34515b6af19236a3fc48122cf585e3f3054d509679b",
        "Scope": "local",
        "Driver": "bridge",
        "IPAM": {
            "Driver": "default",
            "Config": [
                {}
            ]
        },
        "Containers": {
            "8c1a0a5be480921d669a073393ade66a3fc49933f08bcc5515b37b8144f6d47c": {
                "EndpointID": "93b2db4a9b9a997beb912d28bcfc117f7b0eb924ff91d48cfa251d473e6a9b08",
                "MacAddress": "02:42:ac:15:00:02",
                "IPv4Address": "172.21.0.2/16",
                "IPv6Address": ""
            }
        },
        "Options": {},
        "Labels": {}
    }
]
```

The containers you launch into this network must reside on the same Docker host.
Each container in the network can immediately communicate with other containers
in the network. However, the network itself isolates the containers from external
networks.

Within a user-defined bridge network, linking is not supported. You can
expose and publish container ports on containers in this network. This is useful
if you want to make a portion of the `bridge` network available to an outside
network.

A bridge network is useful in cases where you want to run a relatively small
network on a single host. You can, however, create significantly larger networks
by creating an `overlay` network.

### An overlay network with Docker Engine swarm mode

You can create an overlay network on a manager node running in swarm mode
without an external key-value store. The swarm makes the overlay network
available only to nodes in the swarm that require it for a service. When you
create a service that uses the overlay network, the manager node automatically
extends the overlay network to nodes that run service tasks.
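
The rule described above is that an overlay network reaches a node only when that node runs a task of a service attached to the network. That rule can be modelled in a few lines. This is a conceptual sketch only, not how Docker implements scheduling or networking. The `Task` type, the `overlay_membership` function, and the node names are hypothetical. The service and network names reuse those from the swarm example in this section.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Task:
    service: str          # service the task belongs to
    node: str             # node the scheduler placed the task on
    networks: List[str]   # overlay networks the service is attached to


def overlay_membership(tasks: List[Task], all_nodes: List[str]) -> Dict[str, Set[str]]:
    """Return, for every node, the overlay networks extended to it.

    Toy model: a node receives a network only if at least one task
    scheduled on it belongs to a service that uses that network.
    """
    membership: Dict[str, Set[str]] = {node: set() for node in all_nodes}
    for task in tasks:
        membership.setdefault(task.node, set()).update(task.networks)
    return membership


nodes = ["manager-1", "worker-1", "worker-2"]
tasks = [  # two replicas of the hypothetical placement of `my-web`
    Task("my-web", "worker-1", ["my-multi-host-network"]),
    Task("my-web", "worker-2", ["my-multi-host-network"]),
]
for node, nets in overlay_membership(tasks, nodes).items():
    print(node, sorted(nets))
```

In this model `manager-1` runs no `my-web` task, so no network is extended to it. The model tracks only where the network is extended for tasks. It does not track where the network definition itself is stored.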

To learn more about running Docker Engine in swarm mode, refer to the
[Swarm mode overview](../../swarm/index.md).

The example below shows how to create a network and use it for a service from a manager node in the swarm:

```bash
# Create an overlay network `my-multi-host-network`.
$ docker network create \
  --driver overlay \
  --subnet 10.0.9.0/24 \
  my-multi-host-network

400g6bwzd68jizzdx5pgyoe95

# Create an nginx service and extend the my-multi-host-network to nodes where
# the service's tasks run.
$ docker service create --replicas 2 --network my-multi-host-network --name my-web nginx

716thylsndqma81j6kkkb5aus
```

Overlay networks for a swarm are not available to containers started with
`docker run` that don't run as part of a swarm mode service. For more
information, refer to [Docker swarm mode overlay network security model](overlay-security-model.md).

See also [Attach services to an overlay network](../../swarm/networking.md).

### An overlay network with an external key-value store

If you are not using Docker Engine in swarm mode, the `overlay` network requires
a valid key-value store service. Supported key-value stores include Consul,
Etcd, and ZooKeeper (Distributed store). Before creating a network on this
version of the Engine, you must install and configure your chosen key-value
store service. The Docker hosts that you intend to network and the service must
be able to communicate.

>**Note:** Docker Engine running in swarm mode is not compatible with networking
with an external key-value store.

Each host in the network must run a Docker Engine instance. The easiest way to
provision the hosts is with Docker Machine.

You should open the following ports between each of your hosts:

| Protocol | Port | Description        |
|----------|------|--------------------|
| udp      | 4789 | Data plane (VXLAN) |
| tcp/udp  | 7946 | Control plane      |

Your key-value store service may require additional ports.
Check your vendor's documentation and open any required ports.

Once you have several machines provisioned, you can use Docker Swarm to quickly
form them into a swarm that also includes a discovery service.

To create an overlay network, you configure options on the `daemon` on each
Docker Engine for use with the `overlay` network. There are three options to set:

<table>
    <thead>
    <tr>
        <th>Option</th>
        <th>Description</th>
    </tr>
    </thead>
    <tbody>
    <tr>
        <td><pre>--cluster-store=PROVIDER://URL</pre></td>
        <td>Describes the location of the KV service.</td>
    </tr>
    <tr>
        <td><pre>--cluster-advertise=HOST_IP|HOST_IFACE:PORT</pre></td>
        <td>The IP address or interface of the HOST used for clustering.</td>
    </tr>
    <tr>
        <td><pre>--cluster-store-opt=KEY-VALUE OPTIONS</pre></td>
        <td>Options such as TLS certificates or tuning of discovery timers.</td>
    </tr>
    </tbody>
</table>

Create an `overlay` network on one of the machines in the swarm.

    $ docker network create --driver overlay my-multi-host-network

This results in a single network spanning multiple hosts. An `overlay` network
provides complete isolation for the containers.

Then, on each host, launch containers, making sure to specify the network name.

    $ docker run -itd --network=my-multi-host-network busybox

Once connected, each container has access to all the containers in the network,
regardless of which Docker host the container was launched on.

If you would like to try this for yourself, see the
[Getting started for overlay](get-started-overlay.md).
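
Each Engine that joins the key-value-backed overlay network needs the same three daemon options from the table above. As a hedged sketch, the hypothetical helper `cluster_daemon_args` below assembles them into an argument list. The Consul address and the `eth1:2376` advertise value are placeholder assumptions. How you pass the arguments to the daemon depends on your installation.

```python
from typing import Dict, List, Optional


def cluster_daemon_args(
    store_url: str,
    advertise: str,
    store_opts: Optional[Dict[str, str]] = None,
) -> List[str]:
    """Build the three overlay-related daemon options from the table above.

    store_url:  PROVIDER://URL of the key-value store
    advertise:  HOST_IP or HOST_IFACE, plus PORT (for example "eth1:2376")
    store_opts: optional KEY=VALUE pairs passed through --cluster-store-opt
    """
    if "://" not in store_url:
        raise ValueError("store_url must look like PROVIDER://URL")
    args = [f"--cluster-store={store_url}", f"--cluster-advertise={advertise}"]
    for key, value in sorted((store_opts or {}).items()):
        # One --cluster-store-opt flag per KEY=VALUE pair.
        args.append(f"--cluster-store-opt={key}={value}")
    return args


# Placeholder values; substitute your own key-value store address and interface.
print(cluster_daemon_args("consul://192.168.99.100:8500", "eth1:2376"))
```

Check any `--cluster-store-opt` keys you pass, such as TLS file paths, against the daemon reference for your Engine version. The helper only checks that the store URL has a `PROVIDER://` prefix.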

### Custom network plugin

If you like, you can write your own network driver plugin. A network
driver plugin makes use of Docker's plugin infrastructure. In this
infrastructure, a plugin is a process running on the same Docker host as the
Docker `daemon`.

Network plugins follow the same restrictions and installation rules as other
plugins. All plugins make use of the plugin API. They have a lifecycle that
encompasses installation, starting, stopping and activation.

Once you have created and installed a custom network driver, you use it like the
built-in network drivers. For example:

    $ docker network create --driver weave mynet

You can inspect it, connect containers to it and disconnect them from it, and so
forth. Of course, different plugins may make use of different technologies or
frameworks. Custom networks can include features not present in Docker's default
networks. For more information on writing plugins, see
[Extending Docker](../../extend/legacy_plugins.md) and
[Writing a network driver plugin](../../extend/plugins_network.md).

### Docker embedded DNS server

The Docker daemon runs an embedded DNS server to provide automatic service discovery
for containers connected to user-defined networks. Name resolution requests from
the containers are handled first by the embedded DNS server. If the embedded DNS
server is unable to resolve the request, it is forwarded to any external DNS
servers configured for the container. To facilitate this, when the container is
created, only the embedded DNS server reachable at `127.0.0.11` is listed
in the container's `resolv.conf` file.
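
On a user-defined network, `127.0.0.11` should be the only `nameserver` entry in a container's `resolv.conf`. Checking for that is one way to confirm the container uses the embedded DNS server. Below is a minimal sketch of that check. It assumes you have already copied the file's text out of the container, for example by running `cat /etc/resolv.conf` inside it. The helper names and the sample file contents are hypothetical.

```python
from typing import List

EMBEDDED_DNS = "127.0.0.11"  # address of the embedded DNS server, per the text above


def nameservers(resolv_conf: str) -> List[str]:
    """Return the nameserver addresses listed in resolv.conf text."""
    servers = []
    for line in resolv_conf.splitlines():
        fields = line.split()
        # Entries look like "nameserver 127.0.0.11"; commented-out lines
        # start with '#' or ';', so their first field never matches.
        if len(fields) >= 2 and fields[0] == "nameserver":
            servers.append(fields[1])
    return servers


def uses_only_embedded_dns(resolv_conf: str) -> bool:
    """True if the embedded DNS server is the sole configured nameserver."""
    return nameservers(resolv_conf) == [EMBEDDED_DNS]


# Hypothetical /etc/resolv.conf from a container on a user-defined network.
sample = """\
nameserver 127.0.0.11
options ndots:0
"""
print(uses_only_embedded_dns(sample))  # True
```

A container on the default `bridge` network would typically list the host's resolvers instead, so the check returns `False` there.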
For more information on the embedded DNS server on user-defined networks, see
[embedded DNS server in user-defined networks](configure-dns.md).

## Links

Before the Docker network feature, you could use the Docker link feature to
allow containers to discover each other. With the introduction of Docker networks,
containers on user-defined networks can discover each other by name automatically.
You can still create links, but they behave differently when used in the default
`docker0` bridge network compared to user-defined networks. For more information,
refer to [Legacy Links](default_network/dockerlinks.md) for the link feature in the
default `bridge` network and to
[linking containers in user-defined networks](work-with-networks.md#linking-containers-in-user-defined-networks)
for links functionality in user-defined networks.

## Related information

- [Work with network commands](work-with-networks.md)
- [Get started with multi-host networking](get-started-overlay.md)
- [Managing Data in Containers](../../tutorials/dockervolumes.md)
- [Docker Machine overview](https://docs.docker.com/machine)
- [Docker Swarm overview](https://docs.docker.com/swarm)
- [Investigate the LibNetwork project](https://github.com/docker/libnetwork)