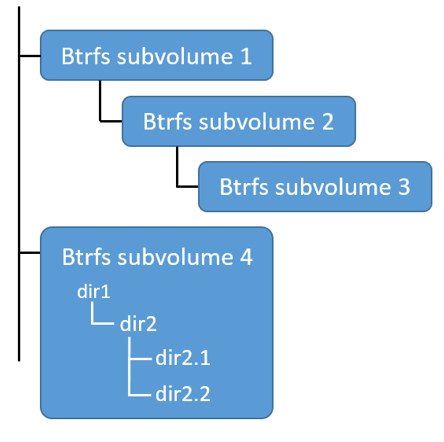

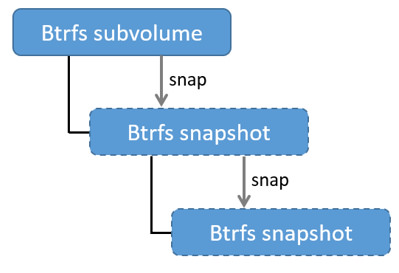

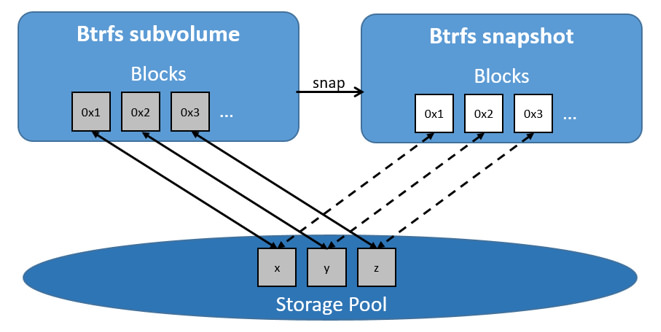

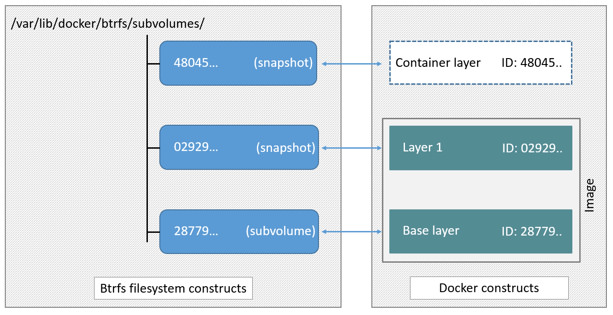

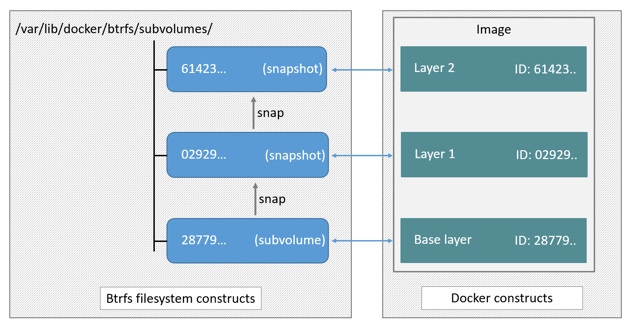

<!--[metadata]>
+++
title = "Btrfs storage in practice"
description = "Learn how to optimize your use of the Btrfs driver."
keywords = ["container, storage, driver, Btrfs "]
[menu.main]
parent = "mn_storage_docker"
+++
<![end-metadata]-->

# Docker and Btrfs in practice

Btrfs is a next-generation copy-on-write filesystem that supports many advanced
storage technologies that make it a good fit for Docker. Btrfs is included in
the mainline Linux kernel, and its on-disk format is now considered stable.
However, many of its features are still under heavy development, and users
should consider it a fast-moving target.

Docker's `btrfs` storage driver leverages many Btrfs features for image and
container management. Among these features are thin provisioning, copy-on-write,
and snapshotting.

This article refers to Docker's Btrfs storage driver as `btrfs` and the overall Btrfs filesystem as Btrfs.

>**Note**: The [Commercially Supported Docker Engine (CS-Engine)](https://www.docker.com/compatibility-maintenance) does not currently support the `btrfs` storage driver.

## The future of Btrfs

Btrfs has long been hailed as the future of Linux filesystems. With full support in the mainline Linux kernel, a stable on-disk format, and active development focused on stability, this is now becoming more of a reality.

As far as Docker on the Linux platform goes, many people see the `btrfs` storage driver as a potential long-term replacement for the `devicemapper` storage driver. However, at the time of writing, the `devicemapper` storage driver should be considered safer, more stable, and more *production ready*. You should only consider the `btrfs` driver for production deployments if you understand it well and have existing experience with Btrfs.
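
If you are evaluating the `btrfs` driver on an existing host, two quick checks are whether the running kernel has Btrfs support registered and which filesystem currently backs `/var/lib/docker`. The Bash sketch below is illustrative only: `kernel_has_btrfs` and `fs_type_of` are hypothetical helper names, and `fs_type_of` assumes GNU coreutils `stat`, whose `-f -c %T` form prints a filesystem's type.

```bash
# Hypothetical helpers for a quick Btrfs pre-flight check (illustrative sketch).

# Succeeds if the filesystem list (default: /proc/filesystems) has a "btrfs"
# entry. A kernel that builds Btrfs as a module lists it only once the module
# is loaded. The optional argument lets the check run against a sample file.
kernel_has_btrfs() {
  local list="${1:-/proc/filesystems}"
  # Each line is "[nodev]<TAB><name>"; compare the last field exactly.
  awk '$NF == "btrfs" { found = 1 } END { exit !found }' "$list"
}

# Prints the type of the filesystem that contains PATH, for example
# "btrfs" or "ext2/ext3" (assumes GNU coreutils stat).
fs_type_of() {
  stat -f -c %T "$1"
}
```

For example, `kernel_has_btrfs && echo "Btrfs available"` checks the running kernel, and `fs_type_of /var/lib/docker` prints `btrfs` only if `/var/lib/docker` is already on a Btrfs filesystem.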

## Image layering and sharing with Btrfs

Docker leverages Btrfs *subvolumes* and *snapshots* for managing the on-disk components of image and container layers. Btrfs subvolumes look and feel like a normal Unix filesystem. As such, they can have their own internal directory structure that hooks into the wider Unix filesystem.

Subvolumes are natively copy-on-write and have space allocated to them on demand
from an underlying storage pool. They can also be nested and snapshotted. The
diagram below shows four subvolumes. 'Subvolume 2' and 'Subvolume 3' are nested,
whereas 'Subvolume 4' shows its own internal directory tree.

Snapshots are a point-in-time read-write copy of an entire subvolume. They exist directly below the subvolume they were created from. You can create snapshots of snapshots as shown in the diagram below.

Btrfs allocates space to subvolumes and snapshots on demand from an underlying pool of storage. The unit of allocation is referred to as a *chunk*, and *chunks* are normally ~1GB in size.

Snapshots are first-class citizens in a Btrfs filesystem. This means that they look, feel, and operate just like regular subvolumes. The technology required to create them is built directly into the Btrfs filesystem thanks to its native copy-on-write design. This means that Btrfs snapshots are space efficient with little or no performance overhead. The diagram below shows a subvolume and its snapshot sharing the same data.

Docker's `btrfs` storage driver stores every image layer and container in its own Btrfs subvolume or snapshot. The base layer of an image is stored as a subvolume, whereas child image layers and containers are stored as snapshots. This is shown in the diagram below.

The high-level process for creating images and containers on Docker hosts running the `btrfs` driver is as follows:

1. The image's base layer is stored in a Btrfs subvolume under `/var/lib/docker/btrfs/subvolumes`.

    The image ID is used as the subvolume name. For example, a base layer with image ID
    `f9a9f253f6105141e0f8e091a6bcdb19e3f27af949842db93acba9048ed2410b` is
    stored in
    `/var/lib/docker/btrfs/subvolumes/f9a9f253f6105141e0f8e091a6bcdb19e3f27af949842db93acba9048ed2410b`.

2. Subsequent image layers are stored as a Btrfs snapshot of the parent layer's subvolume or snapshot.

    The diagram below shows a three-layer image. The base layer is a subvolume. Layer 1 is a snapshot of the base layer's subvolume. Layer 2 is a snapshot of Layer 1's snapshot.

## Image and container on-disk constructs

Image layers and containers are visible in the Docker host's filesystem at
`/var/lib/docker/btrfs/subvolumes/<image-id>` or
`/var/lib/docker/btrfs/subvolumes/<container-id>`. Directories for
containers are present even for containers with a stopped status. This is
because the `btrfs` storage driver mounts a default, top-level subvolume at
`/var/lib/docker/subvolumes`. All other subvolumes and snapshots exist below
that as Btrfs filesystem objects and not as individual mounts.

The following example shows a single Docker image with four image layers.

```bash
$ sudo docker images -a
REPOSITORY          TAG                 IMAGE ID            CREATED             VIRTUAL SIZE
ubuntu              latest              0a17decee413        2 weeks ago         188.3 MB
<none>              <none>              3c9a9d7cc6a2        2 weeks ago         188.3 MB
<none>              <none>              eeb7cb91b09d        2 weeks ago         188.3 MB
<none>              <none>              f9a9f253f610        2 weeks ago         188.1 MB
```

Each image layer exists as a Btrfs subvolume or snapshot with the same name as its image ID, as illustrated by the `btrfs subvolume list` command shown below:

```bash
$ sudo btrfs subvolume list /var/lib/docker
ID 257 gen 9 top level 5 path btrfs/subvolumes/f9a9f253f6105141e0f8e091a6bcdb19e3f27af949842db93acba9048ed2410b
ID 258 gen 10 top level 5 path btrfs/subvolumes/eeb7cb91b09d5de9edb2798301aeedf50848eacc2123e98538f9d014f80f243c
ID 260 gen 11 top level 5 path btrfs/subvolumes/3c9a9d7cc6a235eb2de58ca9ef3551c67ae42a991933ba4958d207b29142902b
ID 261 gen 12 top level 5 path btrfs/subvolumes/0a17decee4139b0de68478f149cc16346f5e711c5ae3bb969895f22dd6723751
```

Under the `/var/lib/docker/btrfs/subvolumes` directory, each of these subvolumes and snapshots is visible as a normal Unix directory:

```bash
$ sudo ls -l /var/lib/docker/btrfs/subvolumes/
total 0
drwxr-xr-x 1 root root 132 Oct 16 14:44 0a17decee4139b0de68478f149cc16346f5e711c5ae3bb969895f22dd6723751
drwxr-xr-x 1 root root 132 Oct 16 14:44 3c9a9d7cc6a235eb2de58ca9ef3551c67ae42a991933ba4958d207b29142902b
drwxr-xr-x 1 root root 132 Oct 16 14:44 eeb7cb91b09d5de9edb2798301aeedf50848eacc2123e98538f9d014f80f243c
drwxr-xr-x 1 root root 132 Oct 16 14:44 f9a9f253f6105141e0f8e091a6bcdb19e3f27af949842db93acba9048ed2410b
```

Because Btrfs works at the filesystem level and not the block level, each image
and container layer can be browsed in the filesystem using normal Unix commands.
The example below shows a truncated output of an `ls -l` command against the
image's top layer:

```bash
$ sudo ls -l /var/lib/docker/btrfs/subvolumes/0a17decee4139b0de68478f149cc16346f5e711c5ae3bb969895f22dd6723751/
total 0
drwxr-xr-x 1 root root 1372 Oct  9 08:39 bin
drwxr-xr-x 1 root root    0 Apr 10  2014 boot
drwxr-xr-x 1 root root  882 Oct  9 08:38 dev
drwxr-xr-x 1 root root 2040 Oct 12 17:27 etc
drwxr-xr-x 1 root root    0 Apr 10  2014 home
...output truncated...
```

## Container reads and writes with Btrfs

A container is a space-efficient snapshot of an image. Metadata in the snapshot
points to the actual data blocks in the storage pool. This is the same as with a
subvolume. Therefore, reads performed against a snapshot are essentially the
same as reads performed against a subvolume. As a result, no performance
overhead is incurred from the Btrfs driver.

Writing a new file to a container invokes an allocate-on-demand operation to
allocate a new data block to the container's snapshot. The file is then written to
this new space. The allocate-on-demand operation is native to all writes with
Btrfs and is the same as writing new data to a subvolume. As a result, writing
new files to a container's snapshot operates at native Btrfs speeds.

Updating an existing file in a container causes a copy-on-write operation
(technically *redirect-on-write*). Btrfs leaves the original data in place and
allocates new space to the snapshot. The updated data is written to this new
space. Then, the filesystem metadata in the snapshot is updated to point
to this new data. The original data is preserved in place for subvolumes and
snapshots further up the tree. This behavior is native to copy-on-write
filesystems like Btrfs and incurs very little overhead.

With Btrfs, writing and updating lots of small files can result in slow performance.
This is covered in more detail in the performance section later in this article.

## Configuring Docker with Btrfs

The `btrfs` storage driver only operates on a Docker host where `/var/lib/docker` is mounted as a Btrfs filesystem. The following procedure shows how to configure Btrfs on Ubuntu 14.04 LTS.

### Prerequisites

If you have already used the Docker daemon on your Docker host and have images you want to keep, `push` them to Docker Hub or your private Docker Trusted Registry before attempting this procedure.

Stop the Docker daemon. Then, ensure that you have a spare block device at `/dev/xvdb`. The device identifier may be different in your environment, and you should substitute your own values throughout the procedure.

The procedure also assumes your kernel has the appropriate Btrfs modules loaded. To verify this, use the following command:

```bash
$ cat /proc/filesystems | grep btrfs
```

### Configure Btrfs on Ubuntu 14.04 LTS

Assuming your system meets the prerequisites, do the following:

1. Install the "btrfs-tools" package.

        $ sudo apt-get install btrfs-tools
        Reading package lists... Done
        Building dependency tree
        <output truncated>

2. Create the Btrfs storage pool.

    Btrfs storage pools are created with the `mkfs.btrfs` command. Passing multiple devices to the `mkfs.btrfs` command creates a pool across all of those devices. Here you create a pool with a single device at `/dev/xvdb`.

        $ sudo mkfs.btrfs -f /dev/xvdb
        WARNING! - Btrfs v3.12 IS EXPERIMENTAL
        WARNING! - see http://btrfs.wiki.kernel.org before using

        Turning ON incompat feature 'extref': increased hardlink limit per file to 65536
        fs created label (null) on /dev/xvdb
            nodesize 16384 leafsize 16384 sectorsize 4096 size 4.00GiB
        Btrfs v3.12

    Be sure to substitute `/dev/xvdb` with the appropriate device(s) on your
    system.

    > **Warning**: Take note of the warning about Btrfs being experimental. As
    > noted earlier, Btrfs is not currently recommended for production deployments
    > unless you already have extensive experience.

3. If it does not already exist, create a directory for the Docker host's local storage area at `/var/lib/docker`.

        $ sudo mkdir /var/lib/docker

4. Configure the system to automatically mount the Btrfs filesystem each time the system boots.

    a. Obtain the Btrfs filesystem's UUID.

        $ sudo blkid /dev/xvdb
        /dev/xvdb: UUID="a0ed851e-158b-4120-8416-c9b072c8cf47" UUID_SUB="c3927a64-4454-4eef-95c2-a7d44ac0cf27" TYPE="btrfs"

    b. Create an `/etc/fstab` entry to automatically mount `/var/lib/docker` each time the system boots. You can identify the filesystem either by its device path or by its UUID; add only one of the following lines. The UUID form keeps working even if the device name changes.

        /dev/xvdb /var/lib/docker btrfs defaults 0 0
        UUID="a0ed851e-158b-4120-8416-c9b072c8cf47" /var/lib/docker btrfs defaults 0 0

5. Mount the new filesystem and verify the operation.

        $ sudo mount -a
        $ mount
        /dev/xvda1 on / type ext4 (rw,discard)
        <output truncated>
        /dev/xvdb on /var/lib/docker type btrfs (rw)

    The last line in the output above shows `/dev/xvdb` mounted at `/var/lib/docker` as Btrfs.

Now that you have a Btrfs filesystem mounted at `/var/lib/docker`, the daemon should automatically load with the `btrfs` storage driver.

1. Start the Docker daemon.

        $ sudo service docker start
        docker start/running, process 2315

    The procedure for starting the Docker daemon may differ depending on the
    Linux distribution you are using.

    You can also select the `btrfs` storage driver explicitly, either by passing
    the `--storage-driver=btrfs` flag to the `docker daemon` command or by adding
    that flag to the `DOCKER_OPTS` line in the Docker config file (for example,
    `/etc/default/docker` on Ubuntu 14.04).

2. Verify the storage driver with the `docker info` command.

        $ sudo docker info
        Containers: 0
        Images: 0
        Storage Driver: btrfs
        [...]
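
If you want to script this check rather than read the full `docker info` listing, the following Bash sketch shows one way to do it. `storage_driver_from_info` and `uses_btrfs_driver` are hypothetical helper names, not Docker commands; they read `docker info` output on standard input and assume it contains a line of the form `Storage Driver: <name>`, as in the example above.

```bash
# Hypothetical helpers (illustrative sketch, not part of Docker).

# Prints the storage driver name from `docker info` output read on stdin.
# Leading spaces or tabs before "Storage Driver:" are tolerated.
storage_driver_from_info() {
  awk -F': ' '/^[ \t]*Storage Driver:/ { print $2; exit }'
}

# Succeeds only if the reported storage driver is "btrfs".
uses_btrfs_driver() {
  [ "$(storage_driver_from_info)" = "btrfs" ]
}
```

For example, `sudo docker info | uses_btrfs_driver && echo "btrfs driver in use"` prints the message only when the daemon reports the `btrfs` driver.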

Your Docker host is now configured to use the `btrfs` storage driver.

## Btrfs and Docker performance

There are several factors that influence Docker's performance under the `btrfs` storage driver.

- **Page caching**. Btrfs does not support page cache sharing. This means that *n* containers accessing the same file require *n* copies to be cached. As a result, the `btrfs` driver may not be the best choice for PaaS and other high-density container use cases.

- **Small writes**. Containers performing lots of small writes (including Docker hosts that start and stop many containers) can lead to poor use of Btrfs chunks. This can ultimately lead to out-of-space conditions on your Docker host and stop it from working. This is a major drawback of current versions of Btrfs.

  If you use the `btrfs` storage driver, closely monitor the free space on your Btrfs filesystem using the `btrfs filesystem show` command. Do not trust the output of normal Unix commands such as `df`; always use the Btrfs native commands.

- **Sequential writes**. Btrfs uses a journaling technique when writing data to disk. This can impact the performance of sequential writes, reducing it by up to half.

- **Fragmentation**. Fragmentation is a natural byproduct of copy-on-write filesystems like Btrfs. Many small random writes can compound this issue. It can manifest as CPU spikes on Docker hosts using SSD media and head thrashing on Docker hosts using spinning media. Both of these result in poor performance.

  Recent versions of Btrfs allow you to specify `autodefrag` as a mount option. This mode attempts to detect random writes and defragment them. You should perform your own tests before enabling this option on your Docker hosts. Some tests have shown this option has a negative performance impact on Docker hosts performing lots of small writes (including systems that start and stop many containers).

- **Solid State Devices (SSD)**.
  Btrfs has native optimizations for SSD media. To enable these, mount with the `-o ssd` mount option. These optimizations include enhanced SSD write performance by avoiding things like *seek optimizations* that have no use on SSD media.

  Btrfs also supports the TRIM/Discard primitives. However, mounting with the `-o discard` mount option can cause performance issues. Therefore, it is recommended that you perform your own tests before using this option.

- **Use Data Volumes**. Data volumes provide the best and most predictable performance. This is because they bypass the storage driver and do not incur any of the potential overheads introduced by thin provisioning and copy-on-write. For this reason, you may want to place heavy write workloads on data volumes.

## Related Information

* [Understand images, containers, and storage drivers](imagesandcontainers.md)
* [Select a storage driver](selectadriver.md)
* [AUFS storage driver in practice](aufs-driver.md)
* [Device Mapper storage driver in practice](device-mapper-driver.md)